TL;DR

- What it means to find all URLs on a domain.

- How to crawl a website to extract every link.

- How to automate URL extraction with tools or custom code (like Python).

- How to collect internal links systematically.

- How to store the URLs you find for SEO, auditing, or analysis.

Finding all the URLs on a domain sounds simple until you actually try it. Google’s site: operator misses pages. Sitemaps skip noindexed content. Manual crawling breaks down on large sites. And if a website renders its pages with JavaScript, many tools won’t even see them.

Whether you’re auditing your own site before a migration, mapping a competitor’s content structure, hunting for broken links, or building a scraper that needs every page URL, this is one of the most common (and most misunderstood) tasks in web scraping and SEO.

In this guide, we’ll walk through multiple methods to find all URLs on a domain, from zero-code approaches you can run in 30 seconds to Python scripts that scale to thousands of pages. For each method, we’ll cover what it finds, what it misses, and when to use it.

Let’s get into it.

Find All URLs On A Domain’s Website

Before jumping into the methods, it’s worth knowing the most common reasons people do this, because your goal will determine which method makes the most sense.

SEO auditing — find orphan pages, check for duplicate content, spot pages that shouldn’t be indexed, or verify that all your important URLs are actually crawlable.

Broken link detection — crawl every URL on your domain and check their status codes. A full URL list is the starting point for any broken link audit.

Site migration — before moving to a new domain or CMS, you need a complete inventory of every page so nothing gets left behind or loses its redirect.

Competitive research — map out a competitor’s content structure to find topics they’re covering that you aren’t.

Web scraping — if you want to scrape data from every page on a site, you first need a list of every page to scrape.

Content audits — identify thin pages, outdated posts, or content gaps across your entire site.

Here’s a brief overview of each technique.

Google search technique

Google Search is the fastest zero-setup method; just enter a search query to find indexed pages on any domain. The catch: Google excludes pages for reasons like duplicate content or noindex tags, so results are never a complete picture.

Sitemaps and robots.txt

Sitemaps and robots.txt give you a more structured view of a site’s pages than Google search, but they come with their own blind spots. A domain can have multiple sitemaps, some pages may be redirecting (for example, with a 302) at the time you check, and SEO plugins like Rank Math or Yoast automatically drop non-canonical pages from the sitemap. So if Page A has a canonical tag pointing to Page B, Page A simply won’t appear.

SEO crawling tools

SEO crawling tools are the most straightforward option: no code, detailed output, and easy to use. The tradeoff is cost. Many tools cap free usage at 500 pages, which works fine for smaller sites but requires a paid plan once you scale.

Scraping

If you’re comfortable with coding, a custom scraper gives you the most control: filter by URL pattern, exclude directories, handle pagination, or integrate directly into your pipeline. It takes more setup, but for large or complex sites, it’s often the only method that gets you everything you need.

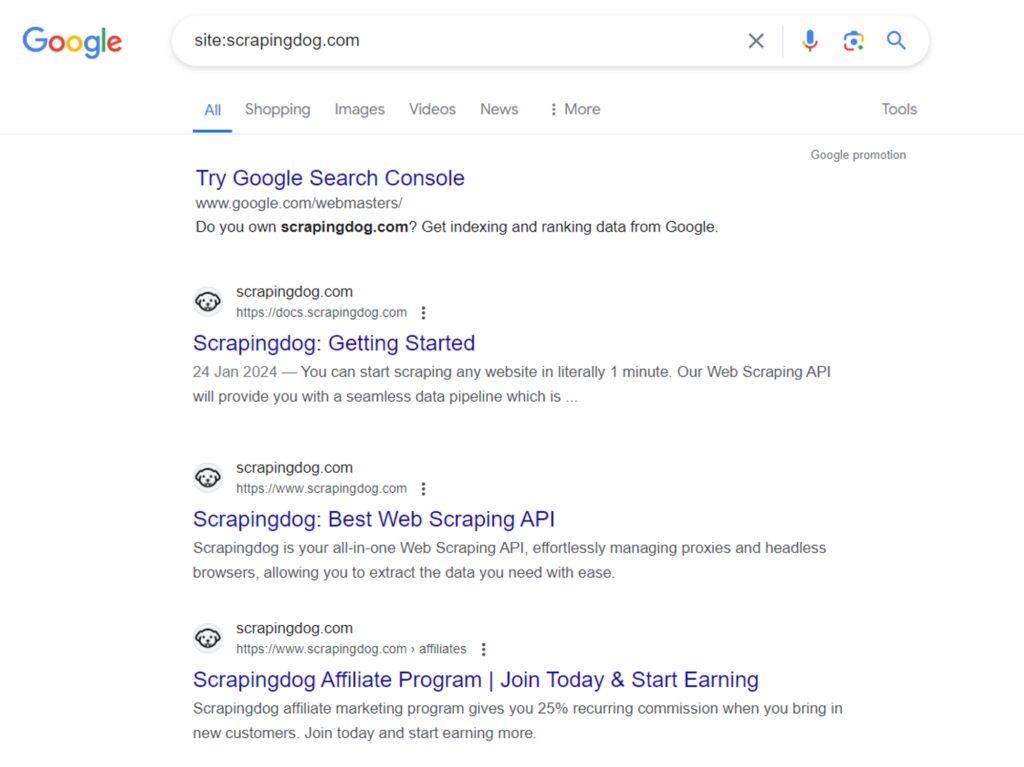

Through Google Site Search

One of the quickest ways to find URLs on a website is with Google’s site search feature. Here’s how to use it:

- Go to Google.com.

- In the search bar, type site:example.com (replace example.com with the website you want to search).

- Hit Enter to see the list of indexed pages for that website.

Let’s find all the pages on scrapingdog.com. Search for site:scrapingdog.com on Google and hit enter.

Google will return a list of indexed pages for a specific domain.

However, as discussed earlier, Google may exclude certain pages for reasons such as duplicate content, low quality, or inaccessibility.

So the “site:” search query is best for rough estimates, not for a complete or exact count.

If you want to do this programmatically, you can use a Google Search API with Python to extract all the links without manually paging through Google’s results.

Through Sitemaps and robots.txt

Sitemaps and robots.txt are two files that almost every website exposes publicly, and together they can give you a near-complete picture of a site’s URL structure. Unlike Google search, you’re not dependent on what Google has chosen to index; you’re looking directly at what the site owner has declared. Here’s how to use each one.

Using sitemaps

A sitemap is an XML file listing all important website pages for search engine indexing. Webmasters use it to help search engines understand the website’s structure and content for better indexing.

Most well-maintained websites have a sitemap, since it helps search engines discover and crawl their pages and is considered good SEO practice. To learn how to create and optimize one effectively, read our article on the topic for practical tips.

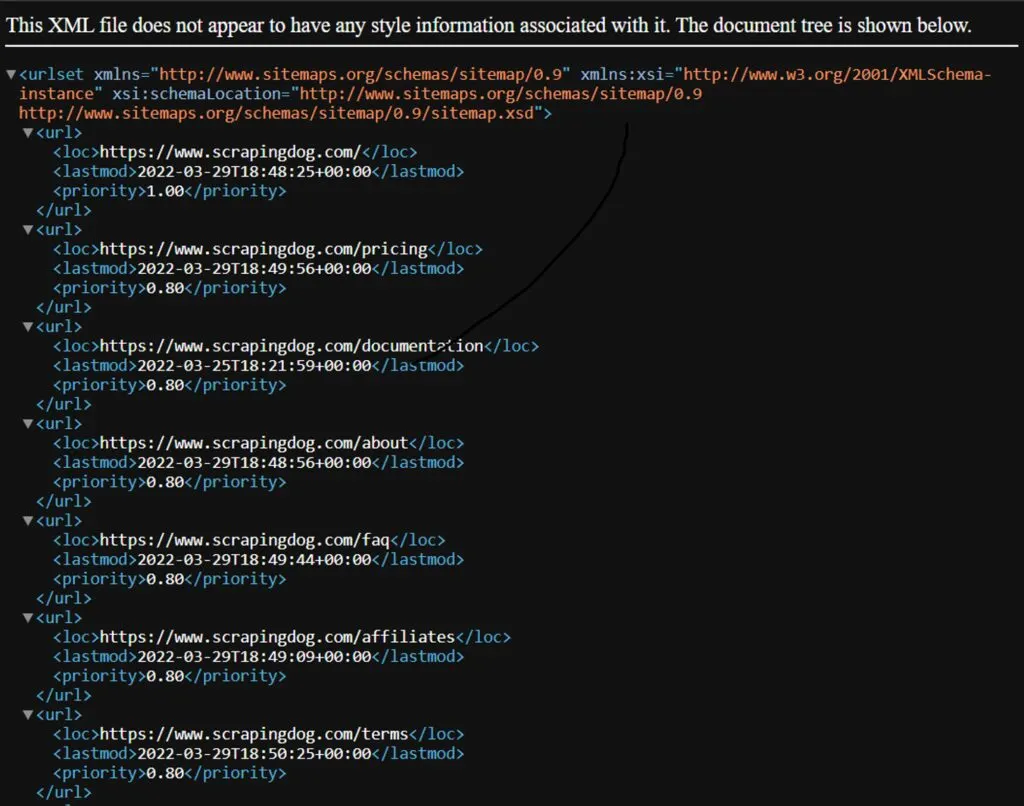

Here’s what a standard sitemap looks like:

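The <loc> element specifies the page URL, <lastmod> indicates when the page was last modified, and <priority> is an optional hint about the page’s importance relative to other URLs on the same site. It is only a hint: it doesn’t guarantee more frequent crawling, and Google has stated that it ignores <priority>.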
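To make those three elements concrete, here is a small sketch that reads each <url> entry from a sitemap with Python’s built-in xml.etree.ElementTree. The two-page sitemap and the parse_urlset helper are hypothetical examples, not taken from a real site; the namespace URI is the one defined by the sitemaps.org protocol, which every <url>, <loc>, <lastmod>, and <priority> tag belongs to.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol; <url>, <loc>, etc. live in it.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical two-page sitemap used only for illustration.
SAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url>
    <loc>https://www.example.com/</loc>
    <lastmod>2024-01-15</lastmod>
    <priority>1.0</priority>
  </url>
  <url>
    <loc>https://www.example.com/blog/</loc>
    <lastmod>2024-01-10</lastmod>
  </url>
</urlset>"""


def parse_urlset(xml_text: str) -> list[dict]:
    """Return one dict per <url> entry with its loc, lastmod and priority."""
    root = ET.fromstring(xml_text)
    entries = []
    for url in root.findall("sm:url", SITEMAP_NS):
        entries.append({
            "loc": url.findtext("sm:loc", default="", namespaces=SITEMAP_NS).strip(),
            # Optional tags: findtext returns None when they are missing.
            "lastmod": url.findtext("sm:lastmod", namespaces=SITEMAP_NS),
            "priority": url.findtext("sm:priority", namespaces=SITEMAP_NS),
        })
    return entries


for entry in parse_urlset(SAMPLE_SITEMAP):
    print(entry)
```

Missing optional tags come back as None. ET.fromstring also accepts raw bytes, so the same function works on XML downloaded from a live sitemap.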

So where do you find a sitemap? Start by checking /sitemap.xml at the root of the website (e.g., https://scrapingdog.com/sitemap.xml).

Websites can have multiple sitemaps in various locations, including: /sitemap.xml.gz, /sitemap_index.xml, /sitemap_index.xml.gz, /sitemap.php, /sitemapindex.xml, /sitemap.gz.
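Checking those locations by hand gets tedious, so here is a minimal sketch that probes each path using only the standard library. The function names are hypothetical, and the soft-404 guard (looking for a <urlset> or <sitemapindex> tag near the top of the response) is a heuristic of this sketch, not part of any standard. Gzip is detected from its first two bytes rather than by trusting the file extension.

```python
import gzip
import urllib.request
from urllib.parse import urljoin

# The common sitemap locations listed above; none is guaranteed to exist.
CANDIDATE_PATHS = [
    "/sitemap.xml",
    "/sitemap.xml.gz",
    "/sitemap_index.xml",
    "/sitemap_index.xml.gz",
    "/sitemap.php",
    "/sitemapindex.xml",
    "/sitemap.gz",
]


def candidate_sitemap_urls(site: str) -> list[str]:
    """Build an absolute URL for each common sitemap path on the site's root."""
    return [urljoin(site, path) for path in CANDIDATE_PATHS]


def decode_body(body: bytes) -> bytes:
    """Decompress gzipped sitemaps; gzip data always starts with the bytes 1f 8b."""
    return gzip.decompress(body) if body[:2] == b"\x1f\x8b" else body


def looks_like_sitemap(body: bytes) -> bool:
    """Guard against 'soft 404' pages that return 200 with an HTML page."""
    head = body[:2048]
    return b"<urlset" in head or b"<sitemapindex" in head


def find_sitemaps(site: str, timeout: float = 10.0) -> dict[str, bytes]:
    """Request every candidate path and keep the ones that return a sitemap."""
    found = {}
    for url in candidate_sitemap_urls(site):
        try:
            with urllib.request.urlopen(url, timeout=timeout) as resp:
                body = decode_body(resp.read())
        except OSError:
            # HTTPError (e.g. 404), URLError, timeouts and bad gzip data all subclass OSError.
            continue
        if looks_like_sitemap(body):
            found[url] = body
    return found
```

For example, find_sitemaps("https://scrapingdog.com/") returns a dictionary mapping each candidate path that answered with sitemap XML to its raw bytes. Because urljoin resolves absolute paths against the root, passing a deeper page URL still probes the site root.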

Many websites also declare the location of their sitemaps in the robots.txt file using Sitemap: lines, which we’ll discuss next.

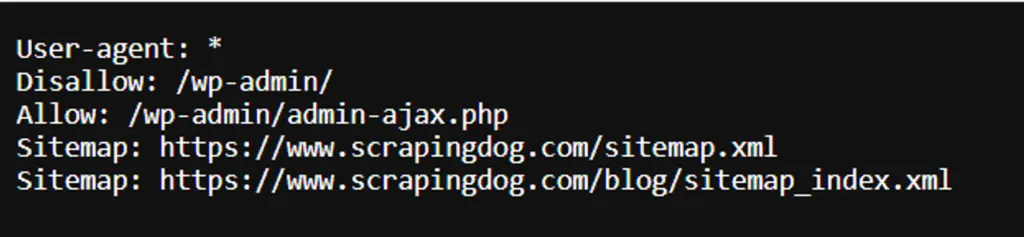

Using robots.txt

The robots.txt file tells search engine crawlers which paths they may crawl and which ones they should skip. It can also specify the location of the website’s sitemap. The file lives at the /robots.txt path at the root of the domain (e.g., https://scrapingdog.com/robots.txt).

Here’s an example of a robots.txt file. Some routes are disallowed for crawling, and the sitemap location is listed as well.

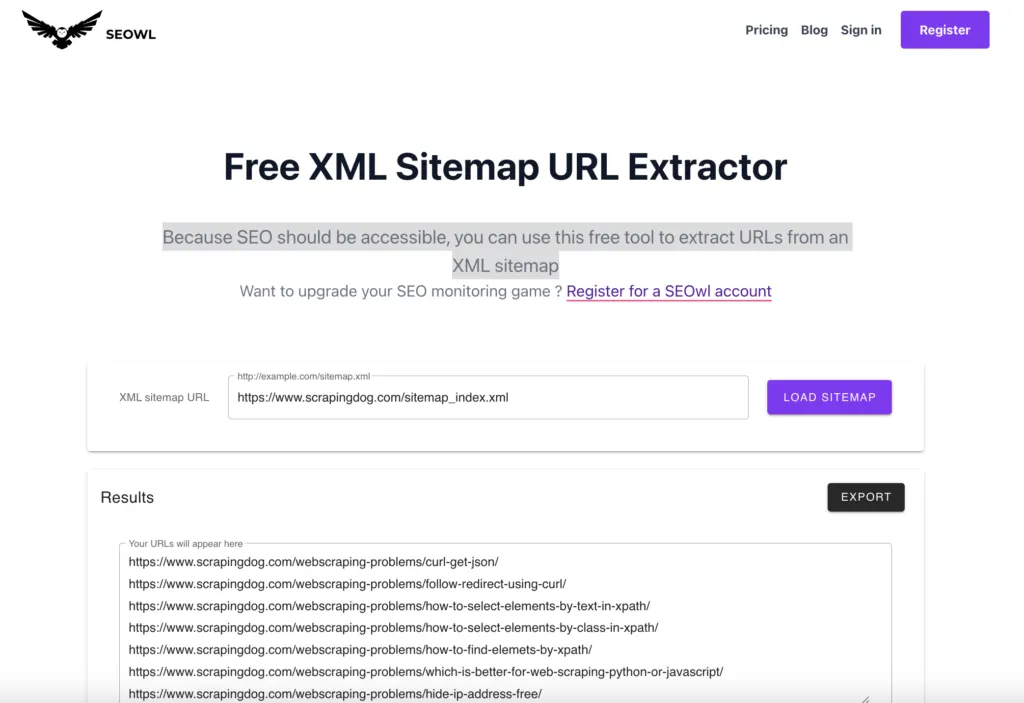
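Python’s standard library can read those Sitemap: lines for you. This sketch uses urllib.robotparser.RobotFileParser, whose site_maps() method (Python 3.8+) returns the declared sitemap URLs, and whose can_fetch() method checks whether a path is disallowed. The robots.txt content is a made-up sample, and the two helper names are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# A made-up robots.txt for illustration; real files are served from /robots.txt.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /admin/
Disallow: /cart/

Sitemap: https://www.example.com/sitemap.xml
Sitemap: https://www.example.com/post-sitemap.xml
"""


def sitemaps_from_robots(robots_txt: str) -> list[str]:
    """Return every URL declared on a `Sitemap:` line."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.site_maps() or []  # site_maps() returns None when there are none


def is_crawl_allowed(robots_txt: str, url: str, agent: str = "*") -> bool:
    """Check whether `agent` may crawl `url` under these robots.txt rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


print(sitemaps_from_robots(SAMPLE_ROBOTS))
```

To work with a live file instead of a string, you can call set_url("https://scrapingdog.com/robots.txt") and then read() on a RobotFileParser before querying it.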

Visit each sitemap listed there to find all the URLs on the website. For smaller sitemaps, you can manually copy the URL from each <loc> tag. For larger sitemaps, consider using an online tool to convert the XML into a more manageable format, such as CSV. There are free tools available, like the Seowl sitemap extractor.
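If you’d rather not rely on an online converter, the sketch below does the XML-to-CSV step in Python. It also follows sitemap index files (a <sitemapindex> whose <sitemap><loc> entries point to child sitemaps), the kind of file Yoast generates at /sitemap_index.xml. The fetch argument is an assumption of this sketch: pass any function that returns a URL’s raw bytes (built on urllib.request or requests, decompressing .gz files if needed), which also makes it easy to test with canned XML. The helper names are hypothetical.

```python
import csv
import xml.etree.ElementTree as ET
from collections import deque
from typing import Callable

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def collect_sitemap_urls(start: str, fetch: Callable[[str], bytes], max_sitemaps: int = 500) -> list[str]:
    """Follow sitemap index files from `start` and return every page URL once."""
    pending = deque([start])
    seen_sitemaps: set[str] = set()
    seen_pages: set[str] = set()
    pages: list[str] = []
    while pending and len(seen_sitemaps) < max_sitemaps:
        sitemap_url = pending.popleft()
        if sitemap_url in seen_sitemaps:
            continue
        seen_sitemaps.add(sitemap_url)
        root = ET.fromstring(fetch(sitemap_url))
        if root.tag.endswith("sitemapindex"):
            # An index file points to child sitemaps rather than pages: queue them.
            for loc in root.findall("sm:sitemap/sm:loc", NS):
                if loc.text:
                    pending.append(loc.text.strip())
            continue
        for loc in root.findall("sm:url/sm:loc", NS):
            url = (loc.text or "").strip()
            if url and url not in seen_pages:
                seen_pages.add(url)
                pages.append(url)
    return pages


def write_csv(urls: list[str], path: str) -> None:
    """Save the URLs as a one-column CSV with a header row."""
    with open(path, "w", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        writer.writerow(["URL"])
        writer.writerows([url] for url in urls)
```

A call would look like write_csv(collect_sitemap_urls("https://scrapingdog.com/sitemap.xml", my_fetch), "sitemap_urls.csv"), where my_fetch is your own download function. Duplicate URLs that appear in more than one child sitemap are written only once, and max_sitemaps stops runaway recursion on misconfigured indexes.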

Through SEO Crawling Tools

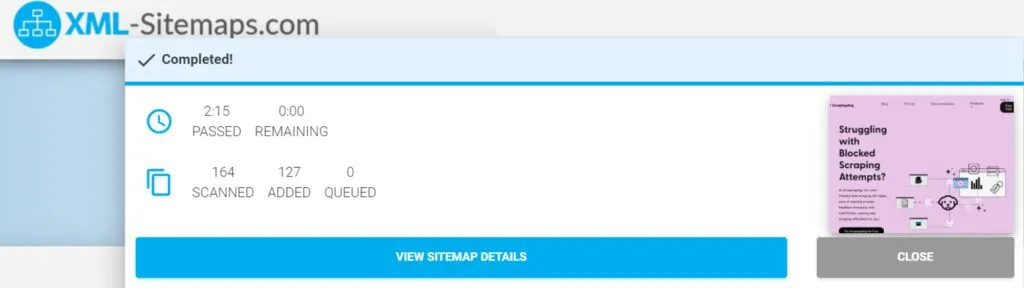

Now let’s see how SEO crawling tools help us find all the pages on a website. There are various SEO crawlers on the market; we’ll explore the free tool XML-Sitemaps.com. Enter your URL and click “START” to create a sitemap. This tool is suitable when you need to quickly create a sitemap for a small website (up to 500 pages).

The process will start, and you will see the number of pages scanned (167 in this case) and the number of pages indexed (127 in this case). Here, “indexed” refers to the pages the tool added to the generated sitemap, not to Google’s index; an external crawler like this has no way of knowing which pages Google has indexed.

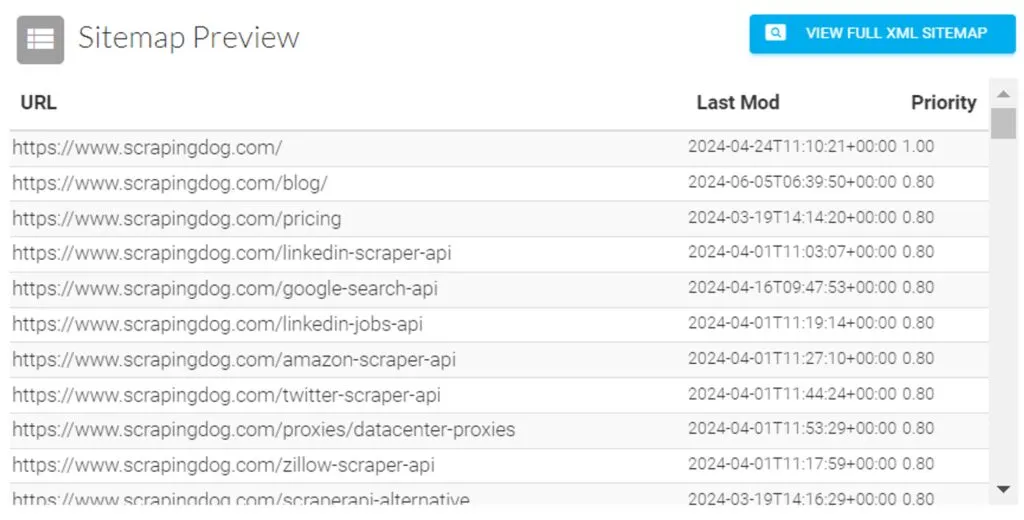

Once the crawling process is complete, the sitemap preview will display all the website’s indexed URLs, including the last modification date and time, as well as the priority of each URL.

You can download the XML sitemap file or receive it via email and put it on your website afterward.

Screaming Frog is another free tool that can help you export URLs from a domain. Keep in mind that the free version crawls up to 500 URLs. To use it, you have to download the software to your machine and, unlike SaaS tools, it crawls the domain from your own setup.

Through Web Scraping

If you’re a developer, you can build your own crawler script to find all URLs on a website. Here, you can take advantage of web scraping APIs like Scrapingdog.

This method offers more flexibility and control over crawling compared to previous methods. It allows you to customize behavior, handle dynamic content, and extract URLs based on specific patterns or criteria.

You can use any language for web crawling, such as Python, JavaScript, or Go. In this example, we will use Python. You’ll also need a Scrapingdog API key, which you can get by signing up for the free trial.

Once you’ve collected the URLs, you can export the list to a CSV file.

Using Python and Scrapingdog

Before writing the code, make sure you have installed the necessary libraries.

Install the libraries using pip:

pip install beautifulsoup4 requests pandas

Next, create a Python file (I’m naming mine main.py) and write the code.

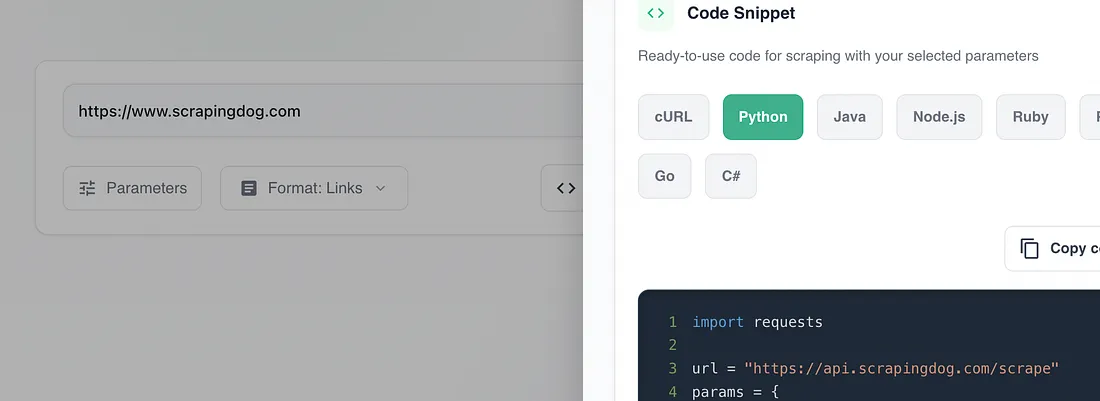

If you paste your target website into the Scrapingdog scraper, you will get ready-to-use Python code.

Just copy this code and paste it into main.py. I set formats to links, which ensures the output contains only the links rather than the whole HTML.

import requests

url = "https://api.scrapingdog.com/scrape"

params = {
    "api_key": "your-api-key",  # replace with your Scrapingdog API key
    "url": "https://www.scrapingdog.com",  # the page whose links you want
    "dynamic": "false",
    "formats": "links"  # return only the links, not the full HTML
}

response = requests.get(url, params=params)

print(response.text)

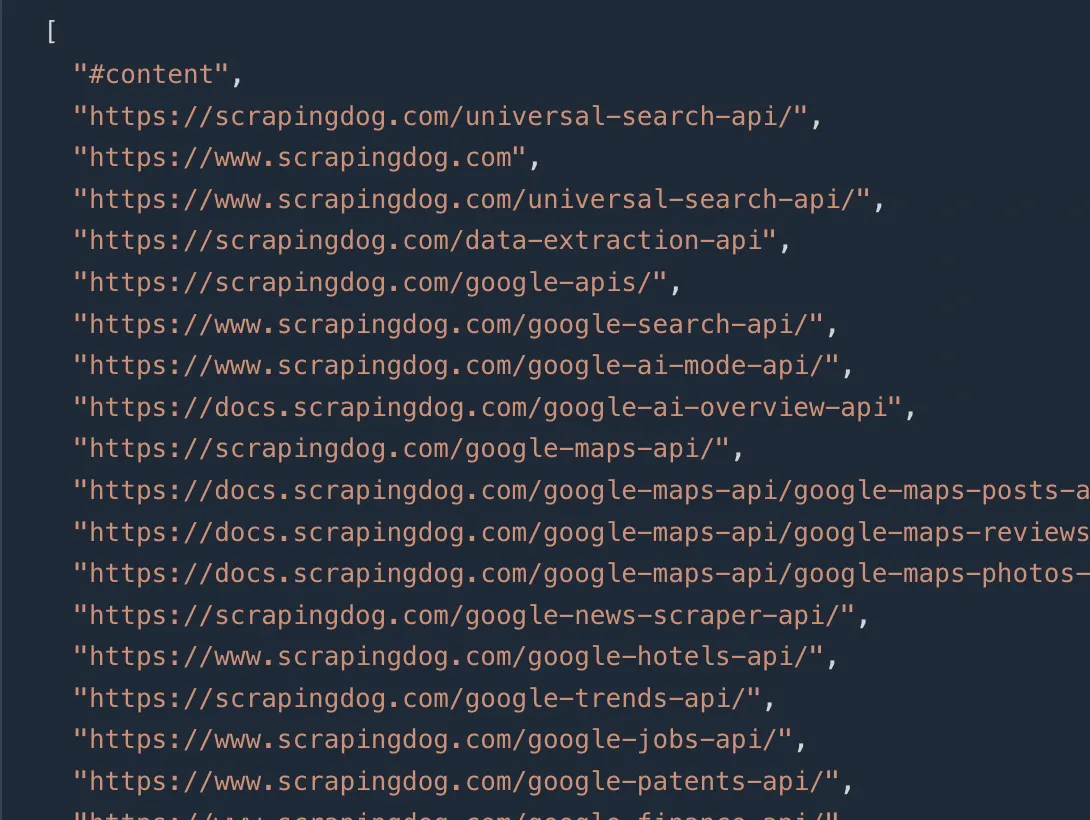

Once you run this code, you will get this JSON response with all the links available on this page.
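Before crawling further, it helps to separate the links that stay on the site from those that point elsewhere. The sketch below assumes the response is shaped like {"links": ["https://...", ...]}, which is how the pandas example in this section reads it; check that against your actual output. The split_links name is hypothetical. Relative links are resolved against the page URL, #fragments are dropped, and non-HTTP schemes such as mailto: are skipped.

```python
import json
from urllib.parse import urldefrag, urljoin, urlparse


def split_links(response_text: str, page_url: str) -> tuple[list[str], list[str]]:
    """Split the links in a JSON response into (internal, external) lists.

    Assumes a body shaped like {"links": ["https://...", "/relative", ...]}.
    """
    links = json.loads(response_text).get("links", [])
    site_host = (urlparse(page_url).hostname or "").removeprefix("www.")
    internal: list[str] = []
    external: list[str] = []
    for link in links:
        # Resolve relative links against the page and drop #fragments.
        absolute, _fragment = urldefrag(urljoin(page_url, link))
        parsed = urlparse(absolute)
        if parsed.scheme not in ("http", "https"):
            continue  # skip mailto:, tel:, javascript: and similar
        host = (parsed.hostname or "").removeprefix("www.")
        bucket = internal if host == site_host else external
        if absolute not in bucket:
            bucket.append(absolute)
    return internal, external
```

Treating www.example.com and example.com as the same site is a design choice here. Subdomains such as blog.example.com are counted as external, so adjust the host comparison if you want them included.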

From here, you can keep making the same GET request through Scrapingdog for each of these URLs, following new internal links as you find them, until you’ve extracted all the links on the domain.
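That repeat-until-done loop is a breadth-first crawl. Here is a minimal sketch of one under a few stated assumptions: fetch_links is any function that returns the links on a page; scrapingdog_links is a hypothetical adapter that reuses the endpoint and parameters from the example above and assumes the same {"links": [...]} response as the pandas example; and only URLs on exactly the same host as the start URL are followed. Whether setting dynamic to "true" enables JavaScript rendering is also an assumption to confirm in Scrapingdog’s documentation.

```python
import json
import urllib.parse
import urllib.request
from collections import deque
from typing import Callable, Iterable

API_URL = "https://api.scrapingdog.com/scrape"


def scrapingdog_links(page_url: str, api_key: str, dynamic: bool = False) -> list[str]:
    """Hypothetical adapter: one Scrapingdog request per page, links only.

    Assumes the JSON body looks like {"links": [...]}.
    """
    query = urllib.parse.urlencode({
        "api_key": api_key,
        "url": page_url,
        "dynamic": "true" if dynamic else "false",  # "true" for JS rendering is an assumption
        "formats": "links",
    })
    with urllib.request.urlopen(f"{API_URL}?{query}", timeout=60) as resp:
        return json.loads(resp.read()).get("links", [])


def normalize(link: str, base: str) -> str:
    """Resolve a possibly relative link against `base` and drop any #fragment."""
    absolute, _fragment = urllib.parse.urldefrag(urllib.parse.urljoin(base, link))
    return absolute


def crawl(start_url: str, fetch_links: Callable[[str], Iterable[str]], max_pages: int = 200) -> list[str]:
    """Breadth-first crawl limited to start_url's host; returns pages in visit order."""
    host = urllib.parse.urlparse(start_url).hostname
    first = normalize(start_url, start_url)
    queue = deque([first])
    seen = {first}  # every URL ever queued, so nothing is fetched twice
    visited: list[str] = []
    while queue and len(visited) < max_pages:
        page = queue.popleft()
        visited.append(page)
        try:
            links = fetch_links(page)
        except Exception as exc:  # one failing page should not stop the whole crawl
            print(f"Skipping {page}: {exc}")
            continue
        for link in links:
            url = normalize(link, page)
            parsed = urllib.parse.urlparse(url)
            if parsed.scheme in ("http", "https") and parsed.hostname == host and url not in seen:
                seen.add(url)
                queue.append(url)
    return visited
```

A typical call would be crawl("https://www.scrapingdog.com/", lambda u: scrapingdog_links(u, "your-api-key")). Each visited page triggers one API request, so max_pages doubles as a budget cap. Keeping the fetcher pluggable also lets you swap in plain requests plus BeautifulSoup for static sites, or a fake link graph for testing.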

If you want to store all these URLs in a CSV file, you can use the pandas library.

import requests
import pandas as pd

url = "https://api.scrapingdog.com/scrape"

params = {
    "api_key": "your-api-key",  # replace with your Scrapingdog API key
    "url": "https://www.scrapingdog.com",
    "dynamic": "false",
    "formats": "links"
}

response = requests.get(url, params=params)

if response.ok:
    data = response.json()
    links = data.get("links", [])  # the list of URLs found on the page
    df = pd.DataFrame(links, columns=["URL"])
    df.to_csv("scrapingdog_links.csv", index=False)
    print(f"Saved {len(df)} links to scrapingdog_links.csv")
else:
    print(f"Request failed with status code: {response.status_code}")

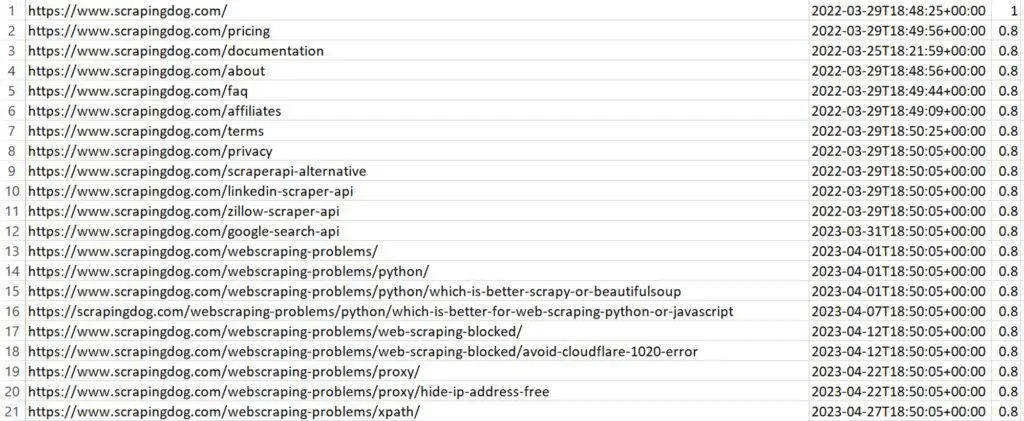

The crawled URLs will be stored in a CSV file, as shown below:

To learn more about how a scraper can be built, read our guide to web scraping with Python.

Which Method Should You Use?

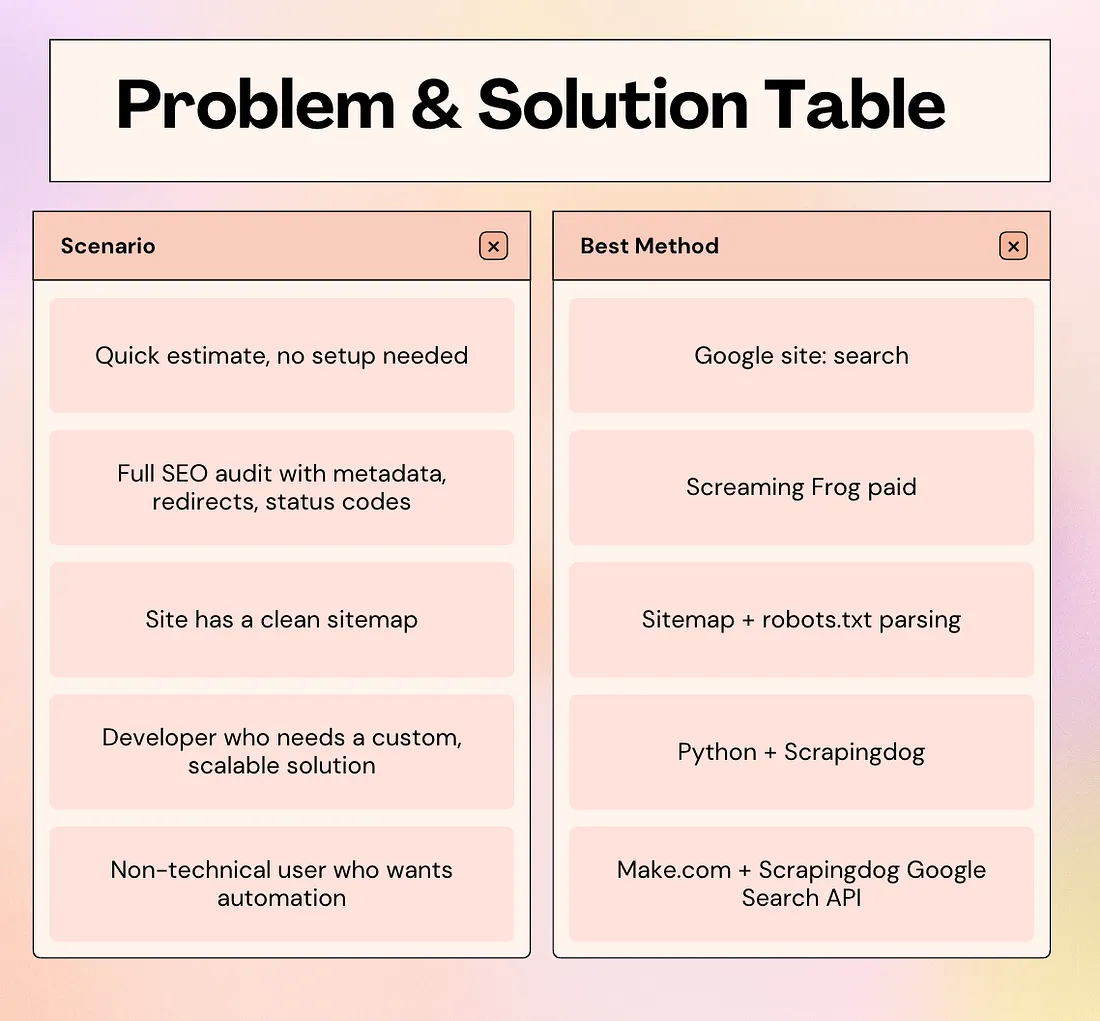

With four methods on the table, the right choice comes down to your goal, technical comfort, and the size of the site you’re working with. Here’s a quick reference:

Key Takeaways

- No single method finds every URL on a domain. For a complete picture, always combine at least two methods.

- Google’s site: operator is the fastest starting point but should only be used for rough estimates — it regularly misses pages due to noindex tags, duplicate content filters, and indexing delays.

- Screaming Frog is the best free option for a full SEO crawl, but caps at 500 URLs on the free plan. For larger sites, use a Python crawler or Scrapingdog’s API.

- JavaScript-heavy sites require a rendering-capable tool. Standard HTTP requests won’t execute JavaScript, so use Scrapingdog’s API to get fully rendered HTML before parsing links.
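Combining methods mostly comes down to set arithmetic once the URLs are normalized, so that https://Example.com and https://example.com/#top count as the same page. The sketch below is one possible approach with hypothetical helper names. Its normalization rules (lowercase scheme and host, drop default ports and fragments, treat an empty path as /) are deliberately conservative and leave trailing slashes and query strings alone, because those can point to genuinely different pages.

```python
from typing import Iterable
from urllib.parse import urlsplit, urlunsplit

DEFAULT_PORTS = {"http": 80, "https": 443}


def normalize_url(url: str) -> str:
    """Canonicalize a URL so the same page found by different methods compares equal."""
    parts = urlsplit(url.strip())
    scheme = parts.scheme.lower()
    netloc = (parts.hostname or "").lower()
    if parts.port is not None and parts.port != DEFAULT_PORTS.get(scheme):
        netloc = f"{netloc}:{parts.port}"
    path = parts.path or "/"
    # Rebuild without the #fragment; query strings are kept as-is.
    return urlunsplit((scheme, netloc, path, parts.query, ""))


def compare_sources(sitemap_urls: Iterable[str], crawled_urls: Iterable[str]) -> dict[str, list[str]]:
    """Show which URLs each method found, after normalization."""
    sitemap = {normalize_url(u) for u in sitemap_urls}
    crawled = {normalize_url(u) for u in crawled_urls}
    return {
        "in_both": sorted(sitemap & crawled),
        "sitemap_only": sorted(sitemap - crawled),  # declared but not found by the crawl
        "crawl_only": sorted(crawled - sitemap),    # linked on the site but missing from the sitemap
    }
```

Pages that appear only in the sitemap are candidates for orphan pages (declared but not linked from anywhere the crawler reached), while crawl-only pages may be missing from the sitemap or excluded from it on purpose, for example as non-canonical URLs.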

Conclusion

Finding all the URLs on a domain is one of those tasks that looks straightforward on the surface but has more nuance than most people expect. Different methods find different pages, and no single approach gives you the full picture every time.

The good news is you don’t need to pick just one. Start with a sitemap for the declared URLs, layer in a crawler for anything that was missed, and fall back to Scrapingdog’s API when JavaScript rendering gets in the way.

Whether you’re running an SEO audit, prepping for a site migration, or building a scraper, a clean, complete URL list is always the starting point. Now you have four ways to build one.

If you want to skip the setup entirely and start extracting URLs right away, try Scrapingdog for free with 1000 credits, no credit card required.