TL;DR

- Scrape Google Maps data (name, address, phone, reviews, photos, posts) using Scrapingdog APIs; works via dashboard or Python; the free trial includes 1,000 credits.

- APIs: Search (listings + data_id), Reviews, Places, Photos, Posts.

- Flow: Search → get data_id → pull reviews/photos/posts → use place_id for full details.

- Stack: Python (requests + pandas) → export to CSV.

- Use cases: lead generation, sentiment analysis, competitor tracking; API handles proxies + blocking.

Google Maps holds a goldmine of business data: phone numbers, addresses, ratings, reviews, photos, operating hours, and even the posts businesses publish to attract customers. If you’re building a lead generation pipeline, running competitor analysis, or doing market research, this data can give you a serious edge.

The problem is that collecting it manually at any meaningful scale is simply not practical.

In this guide, we’ll show you how to scrape Google Maps data using Python and Scrapingdog’s Google Maps API suite. We’ll cover all 5 APIs (Google Maps Search, Google Reviews, Google Places, Google Photos, and Google Posts), with working Python code for each, so you can extract exactly the data your use case needs.

What Data Can You Scrape from Google Maps?

Google Maps exposes a surprising amount of structured data for every business listing. Here’s everything you can extract using Scrapingdog’s APIs:

Basic Business Info

- Business name, address, and phone number

- Website URL

- Geographic coordinates (latitude & longitude)

- Business category (e.g. “Coffee Shop”, “Hardware Store”)

Ratings & Reviews

- Overall star rating

- Total review count

- Individual reviews — text, date, star rating, reviewer name

- Star-by-star breakdown (how many 1-star, 2-star reviews, etc.)

- Whether the reviewer is a Google Local Guide

Operational Details

- Opening hours for each day of the week

- Whether the business is currently open

- Service options — dine-in, takeout, delivery

- Amenities — Wi-Fi, parking, wheelchair access

- Payment methods accepted

Visual & Social Data

- All photos — both owner-uploaded and user-contributed, organized by category (exterior, interior, food, etc.)

- Business posts — promotional updates, announcements, and offers that businesses publish on their Maps listing

Unique Identifiers

- data_id: used to fetch reviews, photos, and posts for a specific business

- place_id: used to pull a full place profile

Where Can You Use This Data?

- Generate leads: most listings include a phone number you can reach out to.

- Reviews can be used for sentiment analysis of any place.

- Posts shared by the company can be used for real-estate mapping.

- Analyze competitors by scraping their reviews and ratings.

- Enhancing existing databases with real-time information from Google Maps.

Setting Up the Environment

I am assuming you already have Python installed on your machine; if not, install it before continuing. Next, create a folder to hold your Python script. I am naming the folder maps.

mkdir maps

cd maps

pip install requests

pip install pandas

requests will be used to make HTTP requests to the API, and pandas will be used to store the extracted data in a CSV file.

Once this is done, sign up for Scrapingdog’s trial pack.

The trial pack comes with 1,000 free credits, which is enough to test the API. We now have all the ingredients to build the Google Maps scraper.

Let’s dive in!

Scraping Google Maps Business Listings

Let’s say I am a manufacturer of hardware items like taps, showers, etc., and I am looking for leads near my New York-based factory. Now, I can use data from Google Maps to find hardware shops near me. With the Google Maps API, I can extract their phone numbers and then reach out.

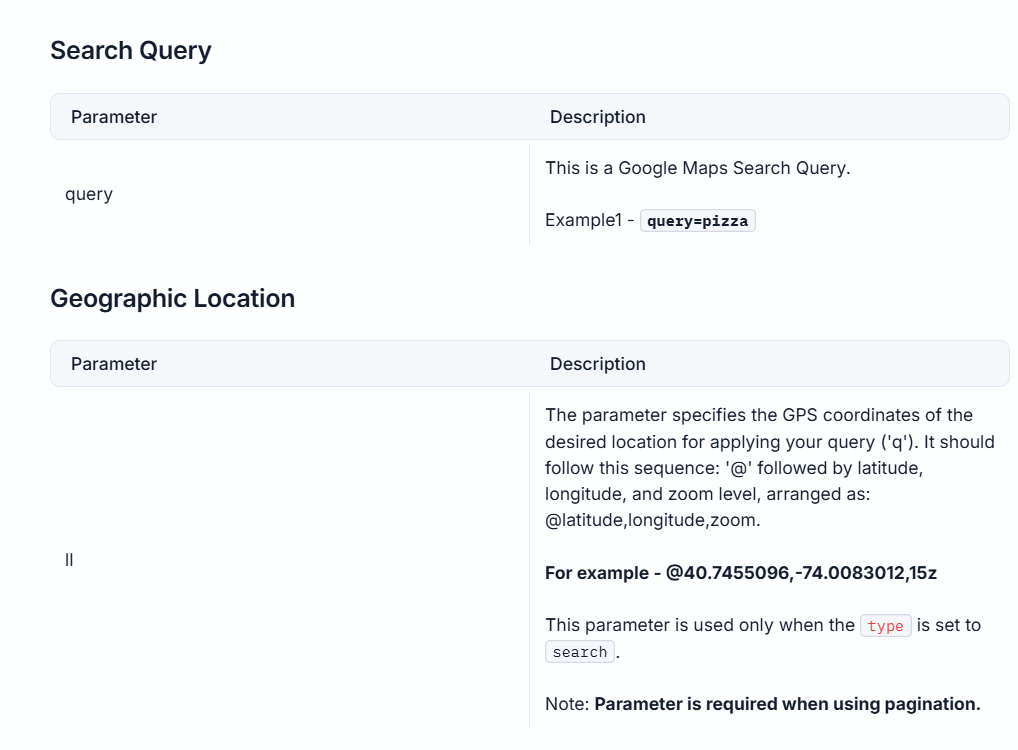

If you look at the Google Maps API documentation, you’ll notice that, in addition to your secret API key, it requires two mandatory arguments: coordinates and a query (see the image below).

The coordinates represent New York’s latitude and longitude (passed as ll in the format @latitude,longitude,zoom), which you can easily copy from the Google Maps URL for the location. The query will be “hardware shops near me.”
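If you would rather not copy the coordinates by hand, a small helper can pull the @latitude,longitude,zoom segment out of a Google Maps URL and hand it to ll. This is a minimal sketch that assumes the URL contains that segment in the same shape as the value used in this guide (@40.6974881,-73.979681,10z); the function name ll_from_maps_url and the sample URL are illustrative, not part of Scrapingdog's API.

```python
import re

# Matches the "@lat,lng,zoomz" segment of a Maps URL, e.g. "@40.6974881,-73.979681,10z".
# Assumption: the URL contains this segment; zoom may be fractional (e.g. "15.5z").
LL_PATTERN = re.compile(r"@(-?\d+(?:\.\d+)?),(-?\d+(?:\.\d+)?),(\d+(?:\.\d+)?)z")


def ll_from_maps_url(maps_url):
    """Return a value for the ll parameter, or None if no coordinates are found."""
    match = LL_PATTERN.search(maps_url)
    if match is None:
        return None
    lat, lng, zoom = match.groups()
    return f"@{lat},{lng},{zoom}z"


if __name__ == "__main__":
    # Hypothetical URL copied from the browser's address bar
    url = "https://www.google.com/maps/@40.6974881,-73.979681,10z"
    print(ll_from_maps_url(url))  # @40.6974881,-73.979681,10z
```

You can then pass the result as "ll" in the params dictionary of the request below.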

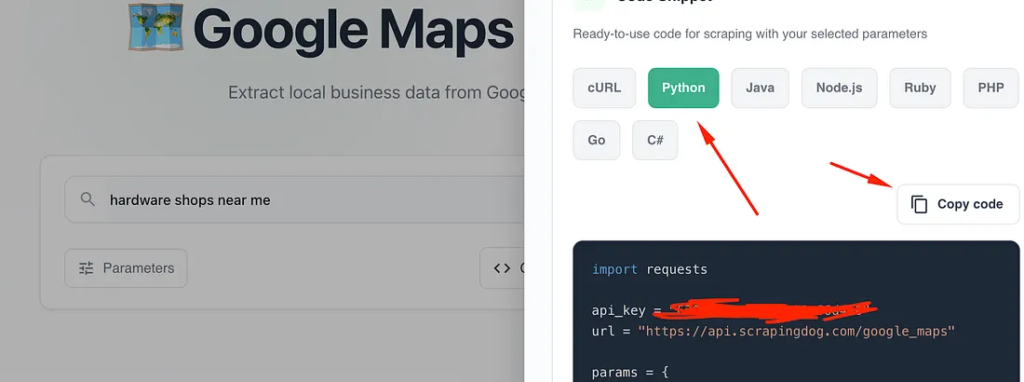

With Scrapingdog, you don’t even need to write code: you can simply enter the relevant values in the dashboard scraper and copy the ready-to-use code directly from there.

import requests

api_key = "your-api-key"
url = "https://api.scrapingdog.com/google_maps"

params = {
    "api_key": api_key,
    "query": "hardware shops near me",
    "ll": "@40.6974881,-73.979681,10z",
    "language": "en"
}

response = requests.get(url, params=params)

if response.status_code == 200:
    data = response.json()
    print(data)
else:
    print(f"Request failed with status code: {response.status_code}")

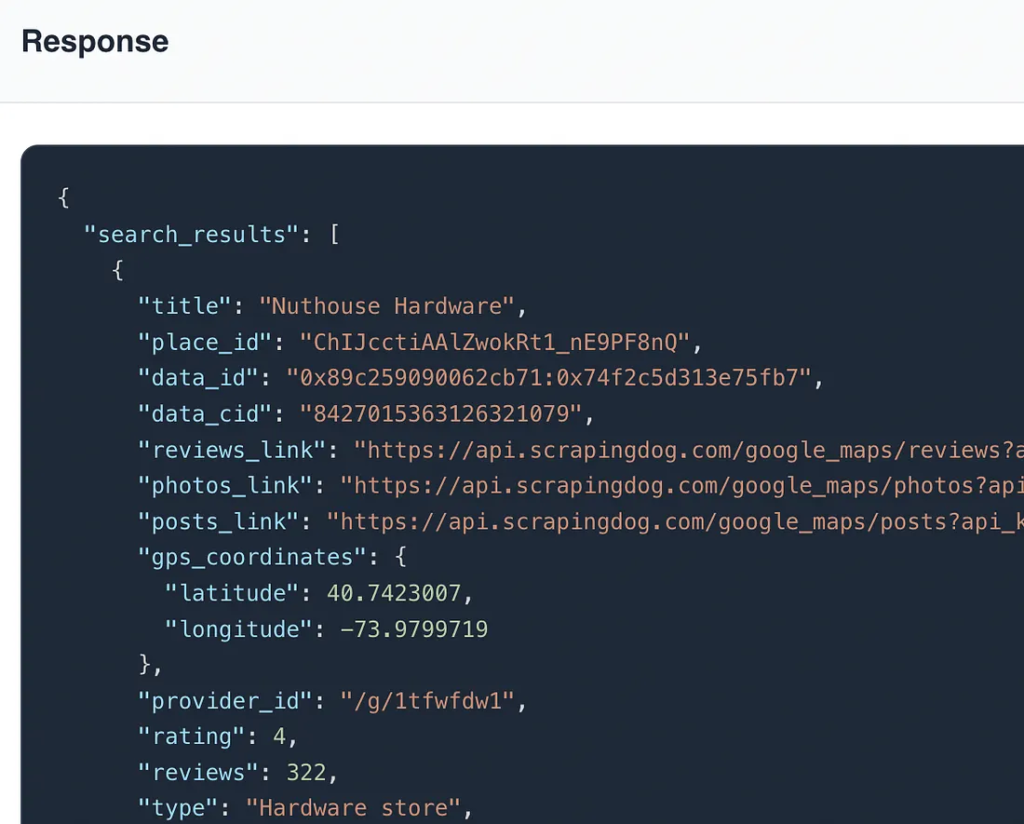

Once you run this code, you will get a clean JSON response from the API. As you can see in the response above, it includes the details of each listing, such as the title, phone number, and operating hours.


We can also save the data directly to a CSV file from Python. Here, we will use the pandas library.

import requests
import pandas as pd

api_key = "your-api-key"
url = "https://api.scrapingdog.com/google_maps"

params = {
    "api_key": api_key,
    "query": "hardware shops near me",
    "ll": "@40.6974881,-73.979681,10z",
    "page": 0
}

response = requests.get(url, params=params)

if response.status_code == 200:
    data = response.json()
    l = []
    # Pull the fields we need for outreach from each listing
    for result in data['search_results']:
        obj = {}
        obj['Title'] = result['title']
        obj['DataId'] = result['data_id']
        # Not every listing has an address or phone number; .get() avoids a KeyError
        obj['Address'] = result.get('address')
        obj['PhoneNumber'] = result.get('phone')
        l.append(obj)

    # Write the rows to CSV without the pandas index column
    df = pd.DataFrame(l)
    df.to_csv('leads.csv', index=False, encoding='utf-8')
else:
    print(f"Request failed with status code: {response.status_code}")

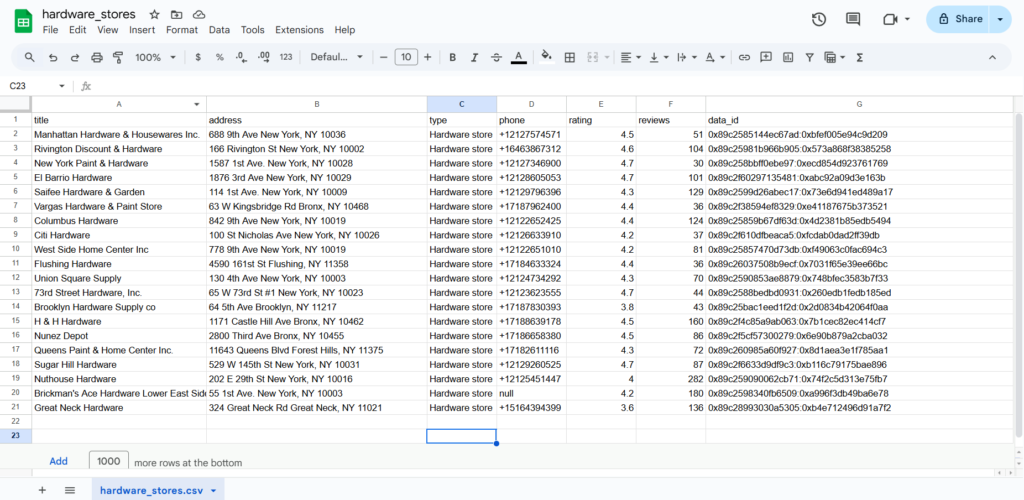

Once we got the results, we iterated over the search_results list in the JSON response. Inside the loop, for each result, the code extracts the title, address, data ID, and phone number, stores them in a dictionary obj, and appends that dictionary to the list l.

After the loop finishes, the list l, which now contains all the extracted data, is converted into a pandas DataFrame.

Finally, the DataFrame is saved to a CSV file named leads.csv. Passing index=False keeps the row numbers (index) out of the file, and encoding='utf-8' writes it as UTF-8.

Once you run this code, a leads.csv file will be created inside the folder maps.
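The script above fetches only page 0. To collect more listings, you can keep incrementing page until a page comes back empty. This is a sketch under a few assumptions: that page increments by 1 starting from 0 (as the FAQ at the end of this guide describes), that an exhausted query returns an empty search_results list, and that max_pages (our own safeguard against spending credits, not an API parameter) is a reasonable cap. The names collect_listings and requests_fetch are hypothetical, and the fetcher is injectable so the loop can be tested offline.

```python
def requests_fetch(url, params):
    """Default fetcher: GET the URL and return the parsed JSON, raising on HTTP errors."""
    import requests  # imported lazily so the pagination logic can be tested without it

    response = requests.get(url, params=params, timeout=60)
    response.raise_for_status()
    return response.json()


def collect_listings(api_key, query, ll, max_pages=5, fetch_json=requests_fetch):
    """Fetch up to max_pages pages of Google Maps results and return flat lead rows."""
    url = "https://api.scrapingdog.com/google_maps"
    rows = []
    for page in range(max_pages):
        params = {"api_key": api_key, "query": query, "ll": ll, "page": page}
        results = fetch_json(url, params).get("search_results", [])
        if not results:  # assumption: an empty page means there is nothing left
            break
        for result in results:
            rows.append({
                "Title": result.get("title"),
                "Address": result.get("address"),
                "DataId": result.get("data_id"),
                "PhoneNumber": result.get("phone"),
            })
    return rows
```

The returned rows drop straight into pandas, for example pd.DataFrame(collect_listings("your-api-key", "hardware shops near me", "@40.6974881,-73.979681,10z")).to_csv("leads.csv", index=False). Keep in mind that every page is a separate request and uses credits.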

This file now contains all the phone numbers and the addresses of the hardware shops. Now, I can call them and convert them into clients.

If you are not a developer and want to pull this data into Google Sheets, I have written a guide on scraping websites using Google Sheets. It shows how to pull data from any API into a Google Sheet.


Scraping Google Maps Reviews

So, after getting the list of nearby hardware shops, what if you want to know what their customers are saying about them? Reviews are one of the best ways to tell whether a business is genuinely thriving.

For this task, we will use the Google Reviews API to retrieve each review’s rating, text, and date for a particular shop.

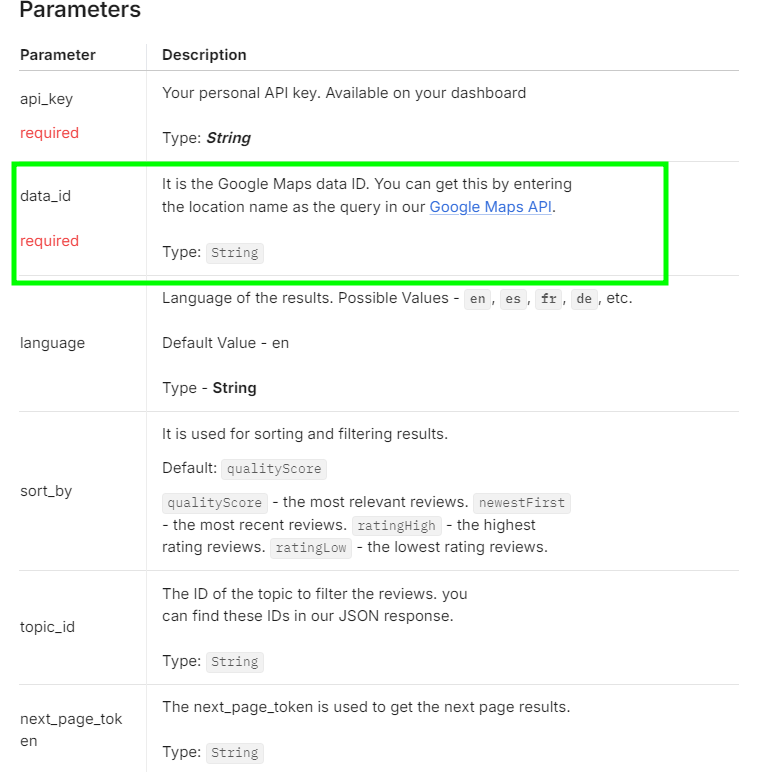

Extracting reviews with this API is straightforward. Before proceeding, be sure to refer to the API documentation.

While reading the docs, you will see that the data_id argument is required (see below ⬇️).

This is a unique ID assigned to each place on Google Maps. Since we have already extracted the Data ID from our previous code while gathering listings, there is no need to manually search for the place on Google Maps to obtain its ID.
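Because leads.csv already holds a DataId for every listing, you can loop over it instead of looking businesses up one at a time. A minimal sketch, assuming the leads are loaded as a list of dicts (for example with pd.read_csv("leads.csv").to_dict("records")) and that fetch_reviews is any function you write that calls the Reviews API for one data_id, such as the request shown below. The name reviews_for_leads is hypothetical.

```python
def reviews_for_leads(leads, fetch_reviews):
    """Map each lead's DataId to its reviews, skipping blank and duplicate IDs.

    leads: list of dicts shaped like the rows in leads.csv.
    fetch_reviews: callable taking a data_id and returning that business's reviews.
    """
    reviews_by_id = {}
    for lead in leads:
        data_id = lead.get("DataId")
        # pandas fills empty cells with NaN (a float), so require a non-empty string
        if not isinstance(data_id, str) or not data_id or data_id in reviews_by_id:
            continue
        reviews_by_id[data_id] = fetch_reviews(data_id)
    return reviews_by_id
```

Checking for a string rather than simple truthiness matters here, because NaN is truthy in Python and would otherwise be sent to the API as a data_id.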

I am going to use this Data ID: 0x89c2585144ec67ad:0xbfef005e94c9d209 for a business named “Maps” located at 688 9th Ave, New York, NY 10036.

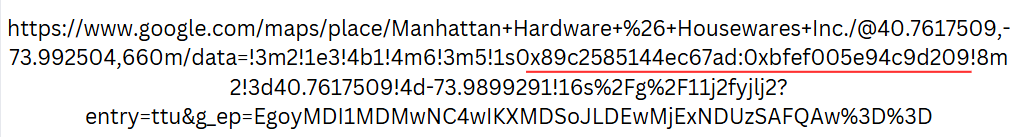

However, if you want to find the Data ID manually, search for the business on Google Maps. After searching, you will find the Data ID in the listing’s URL.

In the URL, look for a string that starts with 0x (0x89 for this New York business) and ends just before the next ‘!’. For this business, it is 0x89c2585144ec67ad:0xbfef005e94c9d209; take everything up to, but not including, the ‘!’.

Marked in the image below ⬇️ is what I am referring to.
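Copying the ID by eye is error-prone, so a regular expression can do it for you. This sketch assumes the data_id always appears in the URL as two hexadecimal numbers joined by a colon (the shape of every data_id in this guide); the regex stops at the ‘!’ automatically because ‘!’ is not a hex digit. The function name and the sample URL in the usage line are illustrative.

```python
import re

# A data_id is two hex numbers joined by a colon, e.g. 0x89c2585144ec67ad:0xbfef005e94c9d209
DATA_ID_PATTERN = re.compile(r"0x[0-9a-fA-F]+:0x[0-9a-fA-F]+")


def data_id_from_maps_url(maps_url):
    """Return the first data_id-shaped string in a Google Maps URL, or None."""
    match = DATA_ID_PATTERN.search(maps_url)
    return match.group(0) if match else None


if __name__ == "__main__":
    # Hypothetical listing URL; only the "!1s0x...:0x...!" part matters here
    url = ("https://www.google.com/maps/place/Shop/@40.76,-73.99,17z/data=!3m1!4b1"
           "!4m6!3m5!1s0x89c2585144ec67ad:0xbfef005e94c9d209!8m2")
    print(data_id_from_maps_url(url))  # 0x89c2585144ec67ad:0xbfef005e94c9d209
```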

Let’s code this in Python and extract the reviews.

import requests

api_key = "your-api-key"
url = "https://api.scrapingdog.com/google_maps/reviews"

params = {
    "api_key": api_key,
    "data_id": "0x89c2585144ec67ad:0xbfef005e94c9d209"
}

response = requests.get(url, params=params)

if response.status_code == 200:
    data = response.json()
    print(data)
else:
    print(f"Request failed with status code: {response.status_code}")

Once you run this code, you will get a JSON response like this one.

[
  {
    "link": "https://www.google.com/maps/reviews/data=!4m8!14m7!1m6!2m5!1sChdDSUhNMG9nS0VJQ0FnSURyLU9QSXlnRRAB!2m1!1s0x0:0xbfef005e94c9d209!3m1!1s2@1:CIHM0ogKEICAgIDr-OPIygE|CgwIhv3PtAYQsPaQxAM|?hl=en-US",
    "rating": 5,
    "date": "7 months ago",
    "source": "Google",
    "review_id": "ChdDSUhNMG9nS0VJQ0FnSURyLU9QSXlnRRAB",
    "user": {
      "name": "Tony Westbrook",
      "link": "https://www.google.com/maps/contrib/111872779354186950334?hl=en-US&ved=1t:31294&ictx=111",
      "contributor_id": 111872779354186960000,
      "thumbnail": "https://lh3.googleusercontent.com/a-/ALV-UjWfJlalEYAG74ndEEFN_o17j8S1H1toiSIe7HJtvky9yQoI1lHU0w=s40-c-rp-mo-br100",
      "local_guide": false,
      "reviews": 33,
      "photos": 28
    },
    "snippet": "I have been looking all over for a flyswatter. I walked right in and there was one and it was even blue my favorite color. They also had quite a selection. I also needed some fly ribbon. They had a nice selection. Great prices. Very helpful. Well stocked! Friendly.",
    "details": {},
    "likes": 0,
    "response": {
      "date": "7 months ago",
      "snippet": ""
    }
  },
  {
    "link": "https://www.google.com/maps/reviews/data=!4m8!14m7!1m6!2m5!1sChZDSUhNMG9nS0VJQ0FnSURRNlpiTWR3EAE!2m1!1s0x0:0xbfef005e94c9d209!3m1!1s2@1:CIHM0ogKEICAgIDQ6ZbMdw|CgsIttrSswUQgNjxFg|?hl=en-US",
    "rating": 3,
    "date": "9 years ago",
    "source": "Google",
    "review_id": "ChZDSUhNMG9nS0VJQ0FnSURRNlpiTWR3EAE",
    "user": {
      "name": "Ben Strothmann",
      "link": "https://www.google.com/maps/contrib/114870865461021130065?hl=en-US&ved=1t:31294&ictx=111",
      "contributor_id": 114870865461021130000,
      "thumbnail": "https://lh3.googleusercontent.com/a-/ALV-UjUCNRewMdLRFVRehId-kl6Sng0n-LACg75xzr2XmsfbougA6047=s40-c-rp-mo-br100",
      "local_guide": false,
      "reviews": 13,
      "photos": null
    },
    "details": {},
    "likes": 2,
    "response": {
      "date": "",
      "snippet": ""
    }
  },
  .....

As you can see, you get the rating, the review text, the name of the reviewer, and more. The overall rating for this business is positive. Rather than judging manually, you can also run sentiment analysis to gauge customers’ mood more accurately.
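As a first step before full sentiment analysis, you can summarize the star ratings. This sketch assumes the response is a list of review objects with a numeric rating field, as in the sample above; if your response wraps the reviews under a key, pass that list instead. It uses the star rating as a crude sentiment proxy (4 to 5 positive, 3 neutral, 1 to 2 negative), which is our own simplification, not a text-based sentiment model.

```python
from collections import Counter


def summarize_reviews(reviews):
    """Return the review count, average rating, and a rating-based sentiment tally."""
    ratings = [r["rating"] for r in reviews if isinstance(r.get("rating"), (int, float))]
    if not ratings:
        return {"count": 0, "average": None, "sentiment": {}}

    def label(stars):
        # Crude proxy: star rating stands in for sentiment
        if stars >= 4:
            return "positive"
        if stars >= 3:
            return "neutral"
        return "negative"

    return {
        "count": len(ratings),
        "average": round(sum(ratings) / len(ratings), 2),
        "sentiment": dict(Counter(label(stars) for stars in ratings)),
    }
```

For real sentiment analysis you would score the snippet text instead; reviews with no snippet (like the second one in the sample) are a reason to keep the rating as a fallback signal.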

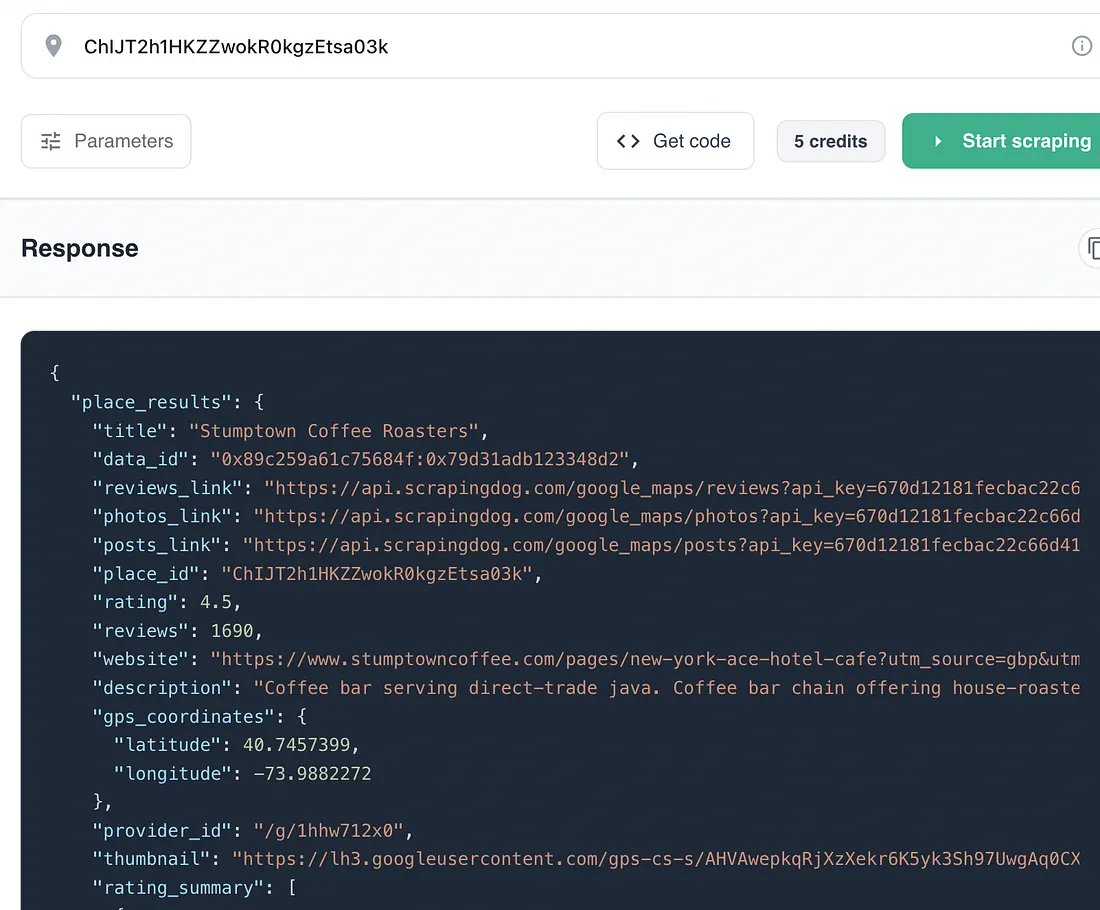

Getting Full Place Details using Google Places API

The Search API gives you a list. The Places API gives you the full picture of a single business.

Pass it a place_id and you get back everything Google Maps knows about that location: ratings broken down by star count, business categories, service options, amenities, accessibility info, payment methods, crowd type, and more. It’s the API to use when you need a complete data snapshot of a specific business rather than a broad list.

You can get the place_id in two ways: from the Google Maps Search API response, or directly from the Google Maps URL of any business listing.

import requests

api_key = "your-api-key"
url = "https://api.scrapingdog.com/google_maps/places"

params = {
    "api_key": api_key,
    "place_id": "ChIJT2h1HKZZwokR0kgzEtsa03k"
}

response = requests.get(url, params=params)

if response.status_code == 200:
    data = response.json()
    print(data)
else:
    print(f"Request failed with status code: {response.status_code}")

A typical response looks like this:

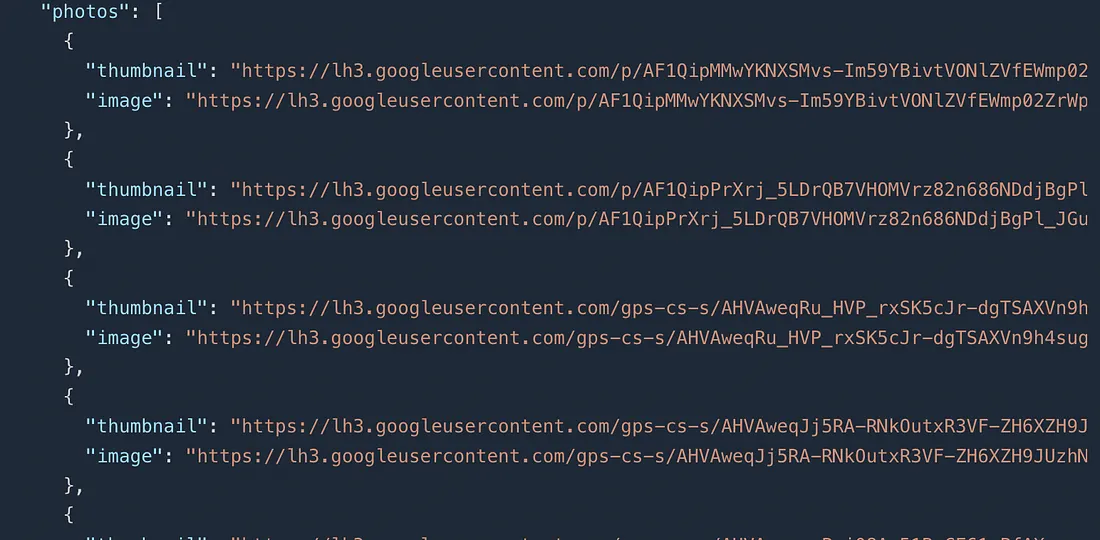

Scraping Google Maps Photos

Every Google Maps listing has a photos section, a mix of images uploaded by the business owner and photos contributed by customers. The Google Maps Photos API lets you extract all of them, organized by category, with both thumbnail and full-resolution URLs.

Practical use cases include visual competitor research, real estate documentation, brand asset collection, and building image datasets for computer vision projects.

The only required parameter beyond your API key is data_id, which you get from the Search API response.

import requests

api_key = "your-api-key"
url = "https://api.scrapingdog.com/google_maps/photos"

params = {
    "api_key": api_key,
    "data_id": "0x88371272500ebf33:0x70a094fd98fb45c0",
    "language": "en",
    "google_domain": "com"
}

response = requests.get(url, params=params)

if response.status_code == 200:
    data = response.json()
    print(data)
else:
    print(f"Request failed with status code: {response.status_code}")

Once you run this code, you will get the listing’s photos in the JSON response.

What if you only need photos of a business’s exterior? In that case, you can use the category_id parameter.

The response includes a categories array listing the photo types available for that business, such as Exterior, Interior, Food, Videos, and By Owner. Simply pass the relevant category ID to the category_id parameter to filter the results accordingly.
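Rather than hard-coding an ID, you can look it up by name from the first (unfiltered) response. This is a sketch under an explicit assumption: that each entry in categories is a dict with a "title" key (e.g. "Exterior") and an "id" key holding the value to pass as category_id. Those key names are guesses, so check them against a real response and adjust. The function name category_id_for is hypothetical.

```python
def category_id_for(photos_response, title):
    """Return the category ID whose title matches (case-insensitive), or None.

    Assumption: entries look like {"title": "Exterior", "id": "..."}; adjust the
    key names to match the actual Photos API response.
    """
    wanted = title.strip().lower()
    for category in photos_response.get("categories", []):
        if str(category.get("title", "")).strip().lower() == wanted:
            return category.get("id")
    return None
```

Usage: category_id = category_id_for(data, "Exterior"), then add "category_id": category_id to params for the second request.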

import requests

api_key = "your-api-key"
url = "https://api.scrapingdog.com/google_maps/photos"

params = {
    "api_key": api_key,
    "data_id": "0x88371272500ebf33:0x70a094fd98fb45c0",
    "category_id": "CgIYEw=="  # "Exterior" category
}

response = requests.get(url, params=params)

if response.status_code == 200:
    data = response.json()
    # Print the image URL of each photo in the filtered category
    for photo in data['photos']:
        print(photo['image'])
else:
    print(f"Request failed with status code: {response.status_code}")

Each photo comes with both a thumbnail URL and a full-resolution image URL. For most analytical use cases, the thumbnail is sufficient and much faster to download in bulk.
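To act on that choice in code, a small helper can collect one URL per photo, preferring the thumbnail by default. This sketch uses the "image" key from the example above and assumes the thumbnail lives under a "thumbnail" key; that second name is an assumption, so confirm it in your response. It falls back to whichever URL is present and skips photos with neither.

```python
def photo_urls(photos, full_resolution=False):
    """Collect one URL per photo, preferring the thumbnail unless full_resolution is True.

    Assumption: each photo dict has "thumbnail" and/or "image" keys.
    """
    primary, fallback = ("image", "thumbnail") if full_resolution else ("thumbnail", "image")
    urls = []
    for photo in photos:
        url = photo.get(primary) or photo.get(fallback)
        if url:  # skip entries with no usable URL
            urls.append(url)
    return urls
```

Usage: photo_urls(data["photos"]) for a fast bulk download list, or photo_urls(data["photos"], full_resolution=True) when you need the originals.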

Scraping Business Posts from Google Maps

Businesses on Google Maps can publish posts directly to their listing, like promotions, new product announcements, seasonal offers, and event updates. Most people never look at these, which is exactly what makes them valuable for competitive intelligence.

The Posts API scrapes all of them for any business, giving you the post text, image URL, publication date, and the URL the business linked to. Run this across a list of competitors, and you can passively track their entire content and promotional strategy without ever visiting their profiles manually.

Like the Reviews and Photos APIs, the Posts API takes a data_id from the Search API response.
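Since Reviews, Photos, and Posts all take the same data_id, one helper can build the request URL for any of them. This is a sketch: the endpoint paths (/google_maps/reviews, /google_maps/photos, /google_maps/posts) are the ones used in this guide's examples, while the helper name data_id_request_url is our own. Extra keyword arguments (such as category_id for photos) are passed through as query parameters.

```python
from urllib.parse import urlencode

BASE_URL = "https://api.scrapingdog.com/google_maps"
# Endpoints used in this guide that take a data_id
DATA_ID_ENDPOINTS = {"reviews", "photos", "posts"}


def data_id_request_url(endpoint, api_key, data_id, **extra_params):
    """Build the GET URL for a data_id-based endpoint (reviews, photos, or posts)."""
    if endpoint not in DATA_ID_ENDPOINTS:
        raise ValueError(f"unknown data_id endpoint: {endpoint!r}")
    params = {"api_key": api_key, "data_id": data_id, **extra_params}
    # urlencode percent-encodes the ":" in the data_id, which the server decodes
    return f"{BASE_URL}/{endpoint}?{urlencode(params)}"
```

Usage: requests.get(data_id_request_url("posts", api_key, "0x88371272500ebf33:0x70a094fd98fb45c0"), timeout=60) sends the same request as the code below.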

import requests

api_key = "your-api-key"
url = "https://api.scrapingdog.com/google_maps/posts"

params = {
    "api_key": api_key,
    "data_id": "0x88371272500ebf33:0x70a094fd98fb45c0"
}

response = requests.get(url, params=params)

if response.status_code == 200:
    data = response.json()
    print(data)
else:
    print(f"Request failed with status code: {response.status_code}")

A typical response for this API will look like this:

{
  "post_results": {
    "location_details": {
      "name": "Kline Home Exteriors",
      "logo": "https://lh3.googleusercontent.com/-prudi4SDf0Y/..."
    },
    "post_data": [
      {
        "description": "Upgrade Your Home's Exterior with Stonework!! Check our latest blog post to see the benefits and options with manufactured stone!!",
        "date": "Aug 4, 2022",
        "image": "https://lh3.googleusercontent.com/geougc/AF1Qip...",
        "link": "https://www.klinehomeexteriors.com/blog/upgrade-your-homes-exterior-with-stonework"
      },
      {
        "description": "Check out our latest blog post on tips to follow before installing new Vinyl Siding!",
        "date": "Jul 7, 2022",
        "image": "https://lh3.googleusercontent.com/geougc/AF1Qip...",
        "link": "https://www.klinehomeexteriors.com/blog/before-installing-new-vinyl-siding"
      }
    ],
    "next_page_token": "CgwI7vvziAYQ2M6i6QIqBkVnSUlDZw=="
  }
}

Run the same request with each competitor’s data_id to collect all of their posts.

Pay attention to the link field. Competitors often link their posts to specific landing pages or blog posts. This alone can tell you what products they're currently pushing and what campaigns are active.
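To compare competitors side by side, it helps to flatten each Posts response into one row per post, tagged with the business name. This sketch follows the field names in the sample response above (post_results, location_details.name, and post_data entries with description, date, image, and link); the function name posts_to_rows is hypothetical.

```python
def posts_to_rows(posts_response):
    """Flatten one Posts API response into rows (one per post) for CSV export."""
    results = posts_response.get("post_results", {})
    business = results.get("location_details", {}).get("name")
    return [
        {
            "Business": business,
            "Date": post.get("date"),
            "Description": post.get("description"),
            "Link": post.get("link"),
            "Image": post.get("image"),
        }
        for post in results.get("post_data", [])
    ]
```

Usage: call it once per competitor response, extend a single list with the results, and write that list out with pd.DataFrame(rows).to_csv("competitor_posts.csv", index=False). Sorting or grouping by the Link column is a quick way to see which landing pages each competitor is pushing.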

Key Takeaways

- data_id is the master key. Get it once from the Search API and use it to unlock reviews, photos, and posts for any business, with no additional lookups needed.

- Each API serves a distinct purpose. Search for discovery, Reviews for sentiment, Places for deep business profiles, Photos for visual data, and Posts for competitor content tracking.

- Pagination is built in. The Search API uses a page integer; Reviews, Photos, and Posts use a next_page_token. Always implement pagination if you need complete data rather than just the first page.

- Everything can be exported to CSV. As the leads example shows, pandas turns these JSON responses into a CSV file, making the data immediately usable in Excel, Google Sheets, or any downstream pipeline.

- Scrapingdog handles the hard parts. Proxy rotation, anti-bot detection, and response parsing are all managed by the API. Your script stays simple regardless of how large the scrape gets.
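Token-based pagination follows a different loop from the Search API's page counter: request a page, read its next_page_token, and send it back until none is returned. This is a sketch under two assumptions: that the token is sent back as a request parameter named next_page_token (check each endpoint's docs), and that its location in the response can differ per API, so the caller supplies get_token (in the Posts sample it sits under post_results). The function name paginate_with_token and the max_pages cap are our own.

```python
def paginate_with_token(fetch_page, params, get_token, max_pages=10):
    """Yield successive pages from an endpoint that paginates with next_page_token.

    fetch_page(params) returns the parsed JSON for one page;
    get_token(page) returns that page's next_page_token, or a falsy value at the end.
    """
    params = dict(params)  # copy so the caller's dict is not mutated
    for _ in range(max_pages):
        page = fetch_page(params)
        yield page
        token = get_token(page)
        if not token:  # no token means this was the last page
            break
        params["next_page_token"] = token
```

For Posts, get_token could be lambda page: page.get("post_results", {}).get("next_page_token"), and fetch_page a function that calls requests.get on the posts endpoint with the given params and returns response.json().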

Conclusion

Google Maps is one of the richest sources of business data on the internet, and with Scrapingdog’s API suite, extracting it with Python is straightforward.

The five APIs covered in this guide work together as a complete pipeline. Use the Search API to find businesses, grab the data_id (and place_id), then pull reviews, photos, posts, and place details as needed. Each step builds on the last.

Scrapingdog’s free trial gives you 1,000 credits to test everything without a credit card. For larger workloads, the API handles proxy rotation and anti-bot detection automatically so your pipeline stays reliable at any scale.

Check out the Google Maps API documentation for the full parameter reference.

Frequently Asked Questions

Is scraping Google Maps legal?

Yes. Scraping publicly available data from Google Maps is generally considered legal. Using a dedicated API like Scrapingdog is the safer approach since it handles compliance and avoids triggering Google’s anti-bot systems.

What is a

A unique identifier assigned to every business listing on Google Maps. You get it from the Search API and use it to fetch reviews, photos, and posts for that business.

How many results does the Search API return per page?

Up to 20 results per page. Increment the page parameter starting from 0 to collect more.

Can Google Maps detect and block scrapers?

Yes. Google actively detects automated traffic and blocks scrapers that send too many requests from the same IP. Scrapingdog handles proxy rotation and request management automatically, so you don’t have to deal with blocks yourself.

What is the difference between

Both uniquely identify a business but serve different APIs. Use data_id for Reviews, Photos, and Posts. Use place_id for the Places API. Both are available in the Search API response.

How do I extract Google Maps data without coding?

Scrapingdog offers a free local leads extraction tool that requires no code. It is good for quick, one-off extractions.
