TL;DR

- Use Scrapingdog’s X (Twitter) Scraper with Python to pull profiles and tweets without login / proxy hassles.

- Setup: API key, Python 3, `requests`; copy-ready scripts included.

- Output: clean JSON (e.g., name / followers, likes / retweets) and scales to millions.

- Faster than DIY scraping; docs / resources linked, plus 1,000 free credits to try.

Twitter (now called X) is one of the most powerful platforms for real-time conversations, brand updates, and trend tracking. Whether you’re an analyst, marketer, or developer, extracting profile information can help you monitor competitors, analyze influencers, and research audience engagement.

But scraping directly from Twitter comes with rate limits, login requirements, and bot detection, which can make the process complicated and unreliable. In some regions or restricted networks, developers also rely on a VPN for X to ensure consistent access before moving to a more scalable API-based solution.

In this tutorial, we’ll use Scrapingdog’s X Scraper API and Python to easily fetch Twitter data without dealing with login flows or rotating proxies.

Now, we have everything to create a scraper for X profiles. We will scrape the profile of Sam Altman as an example for this tutorial.

```python
import requests

api_key = '5eaa61a6e562fc52fe763tr516e4653'
profileId = 'sama'

params = {
    'api_key': api_key,
    'profileId': profileId
}

response = requests.get('https://api.scrapingdog.com/x/profile', params=params)

if response.status_code == 200:
    # Parse the JSON response using response.json()
    response_data = response.json()
    # Now you can work with the response_data as a Python dictionary
    print(response_data)
else:
    print(f'Request failed with status code: {response.status_code}')
```

The code is quite simple and self-explanatory, but let me walk you through it.

- First, we imported the `requests` library.

- Then we declared our API key (use your own key here) and the target X profile’s username. In this case, it is `sama`.

- Finally, we made a `GET` request to Scrapingdog’s X Scraper API.

- After getting the response, we print the data.

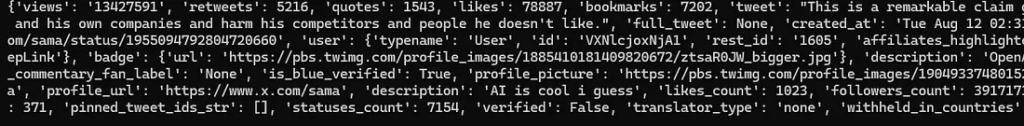

Let’s execute this code and see what data we get.

We got complete profile data from our target profile. You can scale this process to collect data from millions of profiles.
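To go from one profile to many, the same request can be wrapped in a function and called in a loop. What follows is a minimal sketch rather than an official client: the `fetch_profile` and `fetch_profiles` names, the 30-second timeout, and the shape of the error dictionary are assumptions made for illustration. Only the endpoint and the `api_key` / `profileId` parameters come from the example above.

```python
import requests

PROFILE_URL = "https://api.scrapingdog.com/x/profile"  # endpoint from the example above


def fetch_profile(session, api_key, profile_id, timeout=30):
    """Fetch one profile; return the parsed JSON, or an error dict on failure."""
    params = {"api_key": api_key, "profileId": profile_id}
    try:
        response = session.get(PROFILE_URL, params=params, timeout=timeout)
    except requests.RequestException as exc:
        # Network-level problem (DNS failure, timeout, dropped connection, ...)
        return {"profileId": profile_id, "error": str(exc)}
    if response.status_code != 200:
        # Hypothetical error shape, chosen so one bad profile does not stop the loop
        return {"profileId": profile_id, "error": f"HTTP {response.status_code}"}
    return response.json()


def fetch_profiles(api_key, profile_ids, session=None):
    """Fetch several profiles one after another, reusing a single session."""
    session = session or requests.Session()
    return {pid: fetch_profile(session, api_key, pid) for pid in profile_ids}


# Example usage (performs real HTTP requests and consumes credits):
# results = fetch_profiles("your-api-key", ["sama"])
# for handle, data in results.items():
#     print(handle, data)
```

Reusing one `requests.Session` keeps the underlying connection open between calls, and returning an error dictionary instead of raising lets a long run continue past individual failures.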

Scrape an X tweet with Python

Before we dive into scraping a tweet, please take a moment to review the Tweet Scraping API documentation. It’ll help everything make more sense.

For this tutorial, let’s scrape this tweet from Sam Altman.

```python
import requests

api_key = "your-api-key"
url = "https://api.scrapingdog.com/x/post"

params = {
    "api_key": api_key,
    "tweetId": "1955094792804720660",
    "parsed": "true"
}

response = requests.get(url, params=params)

if response.status_code == 200:
    data = response.json()
    print(data)
else:
    print(f"Request failed with status code: {response.status_code}")
```

The flow is the same as the one we used to scrape the profile above. Only the endpoint and the parameters differ: we call `/x/post` and pass `tweetId` along with `parsed` set to `"true"`.

Once you run this code, you will get this JSON.

With this API, you can retrieve detailed metrics like the number of likes, retweets, and more at scale. You can scrape millions of tweets just like this without running into blocking issues.
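Since the profile and tweet requests in this tutorial differ only in endpoint and parameters, both can share one small helper. The sketch below is an illustration under stated assumptions, not Scrapingdog's own client: the `scrapingdog_get` name, the `SCRAPINGDOG_API_KEY` environment variable, and the timeout are hypothetical choices. The two paths and the parameter names are the ones used in the scripts above.

```python
import os

import requests

BASE_URL = "https://api.scrapingdog.com/x"


def scrapingdog_get(endpoint, params, api_key=None, get=requests.get, timeout=30):
    """Call an X Scraper endpoint ('profile' or 'post') and return parsed JSON.

    Raises RuntimeError when the response status is not 200.
    """
    # Hypothetical convention: fall back to an environment variable for the key
    key = api_key or os.environ.get("SCRAPINGDOG_API_KEY")
    if not key:
        raise ValueError("No API key provided")
    response = get(
        f"{BASE_URL}/{endpoint}",
        params={"api_key": key, **params},
        timeout=timeout,
    )
    if response.status_code != 200:
        raise RuntimeError(f"Request failed with status code: {response.status_code}")
    return response.json()


# Example usage (each call performs a real HTTP request):
# profile = scrapingdog_get("profile", {"profileId": "sama"})
# tweet = scrapingdog_get("post", {"tweetId": "1955094792804720660", "parsed": "true"})
```

Reading the key from the environment keeps it out of source control, and the `get` parameter makes it possible to swap in a stub when testing without network access.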

Key Takeaways:

- Shows how to scrape tweets and Twitter (X) profiles using Python and a scraper API.

- Extracts structured data like tweet text, likes, retweets, replies, and user details.

- Handles proxies and anti-bot protections without manual infrastructure setup.

- Includes example Python code to send requests and parse JSON responses.

- Useful for analytics, sentiment analysis, brand monitoring, and social data workflows.

Conclusion

Scraping public Twitter data doesn’t have to be complicated. With Scrapingdog’s X Scraper API, you can skip the hassle of logins, rate limits, and JavaScript rendering and go straight to clean, structured profile and tweet data with a single API call per item.

Whether you’re tracking influencers, analyzing competitors, or powering your research, this approach saves time, reduces friction, and scales beautifully.

Additional Resources

Here are a few additional resources that you may find helpful during your web scraping journey: