TL;DR

- Python guide to collect Walmart product name, price, rating, description; bypass CAPTCHAs with browser-like headers and detect “Robot or human.”

- Parse with

BeautifulSoup; pull description from Next.js__NEXT_DATA__JSON. - Full script included; fine for small runs—expect blocks at scale.

- For production, use Scrapingdog’s Walmart scraper (proxies / headers / retries / headless handled); 1,000 free credits and a dashboard tester.

Walmart is the largest retailer in the US with over 400 million product listings, making it one of the richest sources of public e-commerce data on the internet.

Businesses scrape Walmart to monitor competitor pricing in real time, track inventory changes, analyze customer reviews at scale, and build price comparison tools. Whether you’re doing market research or building a data pipeline, Python gives you the fastest path to structured Walmart data.

In this guide, you’ll learn how to scrape Walmart product pages using Python and later with Scrapingdog’s Walmart Scraper API.

Why Scrape Walmart? Key Use Cases

Before we write a single line of code, it’s worth understanding what you can actually do with Walmart data:

- Price monitoring — Track price changes on specific products over time and build alerts when prices drop

- Competitor analysis — If you sell on Walmart Marketplace, scraping competitor listings helps you adjust pricing and positioning

- Market research — Analyze which products are trending, which have the most reviews, and how ratings shift

- Price comparison tools — Build apps that compare Walmart prices against Amazon or eBay in real time

- Inventory tracking — Monitor stock availability for high-demand products

How to Scrape Walmart Product Data with Python

To begin with, we will create a folder and install all the libraries we might need during the course of this tutorial.

For now, we will install two libraries

- Requests will help us to make an HTTP connection with Walmart.

- BeautifulSoup will help us to create an HTML tree for smooth data extraction.

>> mkdir walmart

>> pip install requests beautifulsoup4 pandas

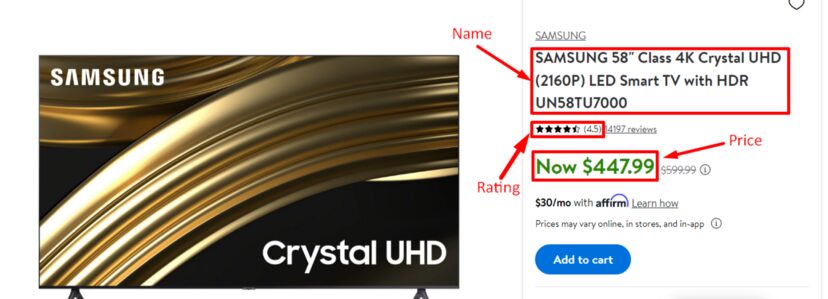

Inside this folder, you can create a python file where we will write our code. We will scrape this Walmart page. Our data of interest will be:

- Name

- Price

- Rating

- Product Details

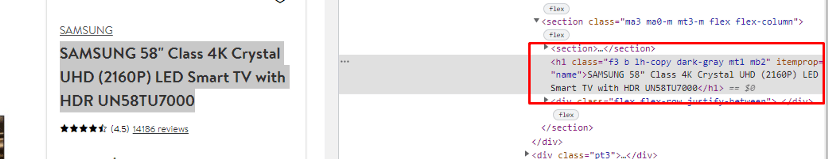

First of all, we will find the locations of these elements in the HTML code by inspecting them.

As you can see, the product name is stored in an h1 tag with itemprop="name". Now, let’s inspect where Walmart stores the price.

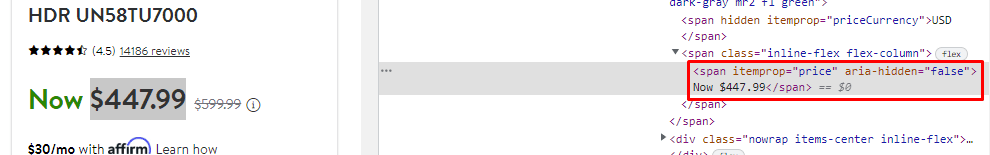

The price is stored inside a span tag with itemprop="price". Now let’s find where Walmart stores the rating.

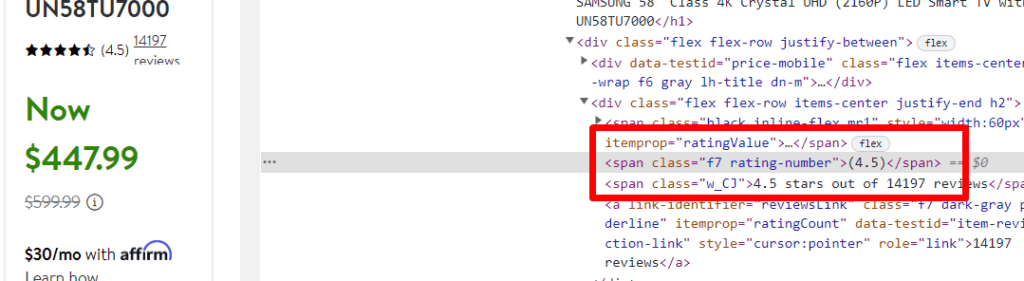

The rating is inside a span tag with the class rating-number. Finally, let’s locate the product description.

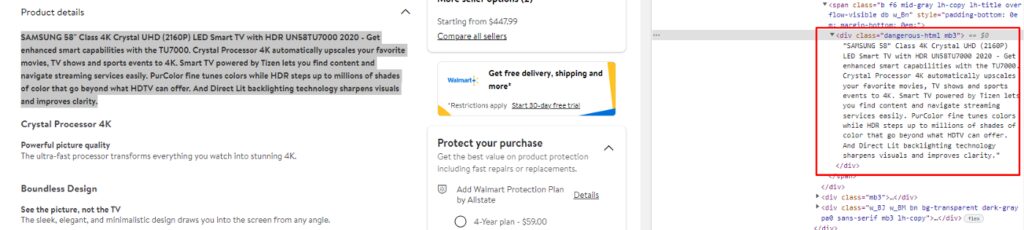

The product description sits inside a div tag with the class dangerous-html.

Now that we’ve mapped out all the elements we need, let’s start by making a basic GET request to the product page and see how Walmart responds.

We’ll start with just the requests library, no headers, no extras, just to see what Walmart returns to a raw, unauthenticated request.

import requests

from bs4 import BeautifulSoup

target_url = "https://www.walmart.com/ip/SAMSUNG-58-Class-4K-Crystal-UHD-2160P-LED-Smart-TV-with-HDR-UN58TU7000/820835173"

response = requests.get(target_url)

print(response.text)

As expected, Walmart returns a CAPTCHA instead of product data. This happens because Walmart’s servers inspect incoming requests and flag anything that doesn’t look like a real browser.

The fix is straightforward. We need to send HTTP headers that mimic a genuine browser request. The most important ones are:

User-Agent— identifies the browser and OS making the requestAccept— tells the server what content types the client can handleAccept-Language— specifies the preferred languageAccept-Encoding— indicates supported compression formatsReferer— simulates arriving from another page (e.g. Google)Host— the target domainConnection— keeps the socket open between requests

Together, these headers make your request look indistinguishable from one coming out of a real browser.

import requests

from bs4 import BeautifulSoup

target_url = "https://www.walmart.com/ip/SAMSUNG-58-Class-4K-Crystal-UHD-2160P-LED-Smart-TV-with-HDR-UN58TU7000/820835173"

accept = "text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3;q=0.9"

headers = {

"Accept": accept,

"Accept-Encoding": "gzip, deflate, br",

"Accept-Language": "en-US,en;q=0.9",

"Connection": "keep-alive",

"Referer": "https://www.google.com",

"User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36",

}

response = requests.get(target_url, headers=headers)

print(response.text)

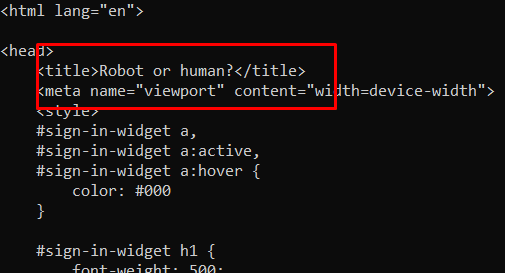

Walmart returns a 200 OK status code even when it serves a CAPTCHA page. You can’t rely on the status code alone to know if your request succeeded. Instead, check the response body for a telltale string from the CAPTCHA page.

if "Robot or human" in response.text:

print("Blocked — CAPTCHA detected")

else:

print("Success — page loaded correctly")

If the check prints ‘Blocked’, you’ll need to rotate your headers or try a different User-Agent. If it prints ‘Success’, you’re clear to proceed with extraction.

Now let’s parse the response with BeautifulSoup and extract each data point we mapped earlier.

soup = BeautifulSoup(response.text, "html.parser")

results = []

product = {}

try:

product["price"] = soup.find("span", {"itemprop": "price"}).text.replace("Now ", "").strip()

except AttributeError:

product["price"] = None

We strip out the 'Now ' prefix that Walmart sometimes prepends to prices, leaving just the numeric value. The try/except block handles cases where the element isn’t found on the page. For example, if a product is out of stock and the price isn’t rendered.

Next, let’s extract the product name and rating the same way.

try:

product["name"] = soup.find("h1", {"itemprop": "name"}).text.strip()

except AttributeError:

product["name"] = None

try:

product["rating"] = soup.find("span", {"class": "rating-number"}).text.strip("()")

except AttributeError:

product["rating"] = None

results.append(product)

print(results)

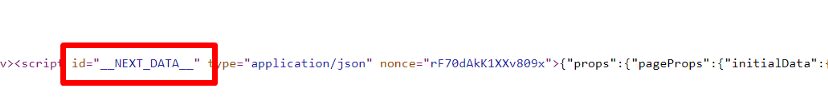

Notice that we have everything except the product description. If you search through the raw HTML returned by your script, you won’t find the dangerous-html class anywhere.

This is because Walmart is built on Next.js, which embeds its data as a JSON blob inside a <script> tag with id="__NEXT_DATA__" rather than rendering it directly in the HTML. Since this data is injected client-side after the page loads, a basic HTTP request captures it before JavaScript has a chance to run, but the JSON blob itself is still present in the raw HTML, which means we can extract it without a headless browser.

Every Next.js site follows this same pattern, so this technique works broadly beyond just Walmart.

The __NEXT_DATA__ script tag contains all the product data we need in a single structured JSON object — including fields we haven’t even explicitly scraped yet like brand, availability, and images.

import json

next_data = soup.find("script", {"id": "__NEXT_DATA__"})

json_data = json.loads(next_data.string)

print(json_data)

This is a huge JSON data which might be a little intimidating. You can use tools like JSON viewer to figure out the exact location of your desired object.

try:

obj["detail"] = jsonData['props']['pageProps']['initialData']['data']['product']['shortDescription']

except:

obj["detail"]=None

At this point we have all four data points — name, price, rating, and description, all successfully extracted.

One thing worth noting: the __NEXT_DATA__ JSON blob actually contains all the product data we scraped earlier via BeautifulSoup as well. If you explore the JSON structure using a tool like JSONViewer, you’ll find price, name, and rating all nested inside it, meaning you could skip the BeautifulSoup selectors entirely and pull everything from the JSON in one shot.

For a deeper dive into headers, sessions, and other Python scraping fundamentals, check out our Web Scraping with Python guide.

Complete Code

import json

import requests

from bs4 import BeautifulSoup

TARGET_URL = "https://www.walmart.com/ip/SAMSUNG-58-Class-4K-Crystal-UHD-2160P-LED-Smart-TV-with-HDR-UN58TU7000/820835173"

accept = "text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3;q=0.9"

headers = {

"Accept": accept,

"Accept-Encoding": "gzip, deflate, br",

"Accept-Language": "en-US,en;q=0.9",

"Connection": "keep-alive",

"Referer": "https://www.google.com",

"User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36",

}

response = requests.get(TARGET_URL, headers=headers)

if "Robot or human" in response.text:

raise SystemExit("Blocked by Walmart — try rotating your User-Agent or headers.")

soup = BeautifulSoup(response.text, "html.parser")

product = {}

try:

product["name"] = soup.find("h1", {"itemprop": "name"}).text.strip()

except AttributeError:

product["name"] = None

try:

product["price"] = soup.find("span", {"itemprop": "price"}).text.replace("Now ", "").strip()

except AttributeError:

product["price"] = None

try:

product["rating"] = soup.find("span", {"class": "rating-number"}).text.strip("()")

except AttributeError:

product["rating"] = None

try:

next_data = soup.find("script", {"id": "__NEXT_DATA__"})

json_data = json.loads(next_data.string)

product["description"] = json_data["props"]["pageProps"]["initialData"]["data"]["product"]["shortDescription"]

except (AttributeError, KeyError):

product["description"] = None

print(product)

Limitation

The approach above works well for small-scale scraping. A few dozen or hundred pages. But once you start hitting Walmart at any meaningful volume, you’ll run into IP bans, rate limiting, and increasingly aggressive CAPTCHA challenges that headers alone won’t solve.

At scale, you need proxy rotation, automatic header cycling, and retry logic built into your pipeline. Building and maintaining that infrastructure yourself is time-consuming and brittle. Walmart actively updates its bot detection, so what works today may break tomorrow.

That’s where Scrapingdog’s Walmart Scraper API comes in. It handles proxy rotation, header management, retries, and JavaScript rendering automatically, so you can focus on the data rather than the infrastructure.

Scraping Walmart with Scrapingdog

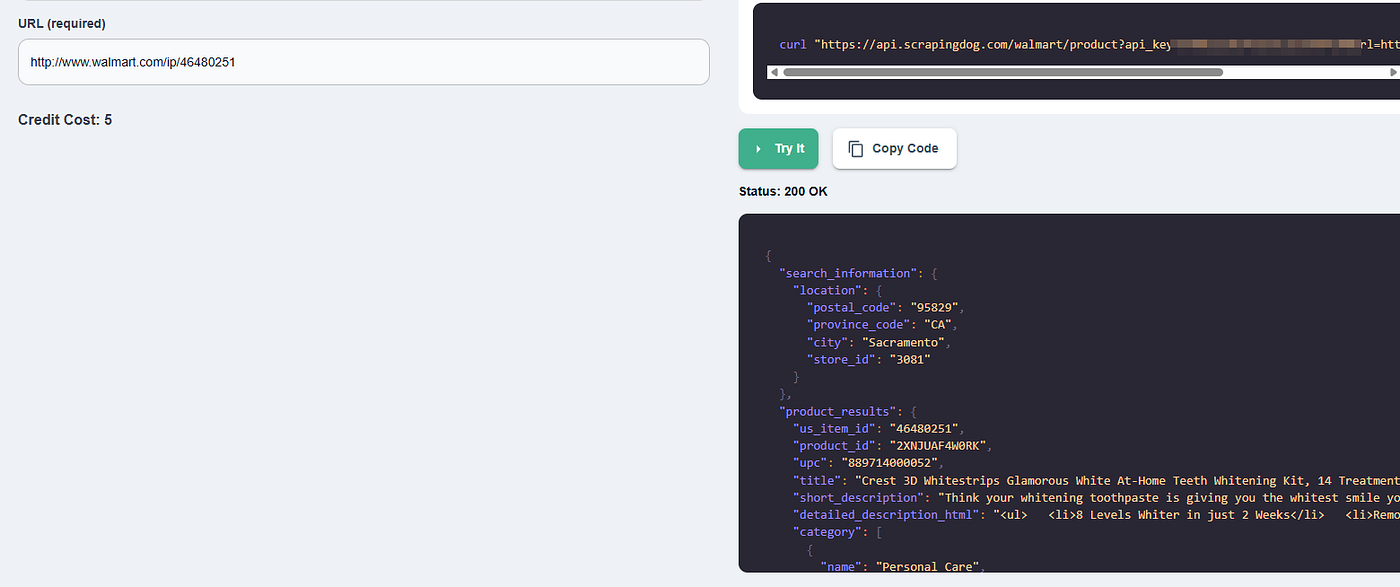

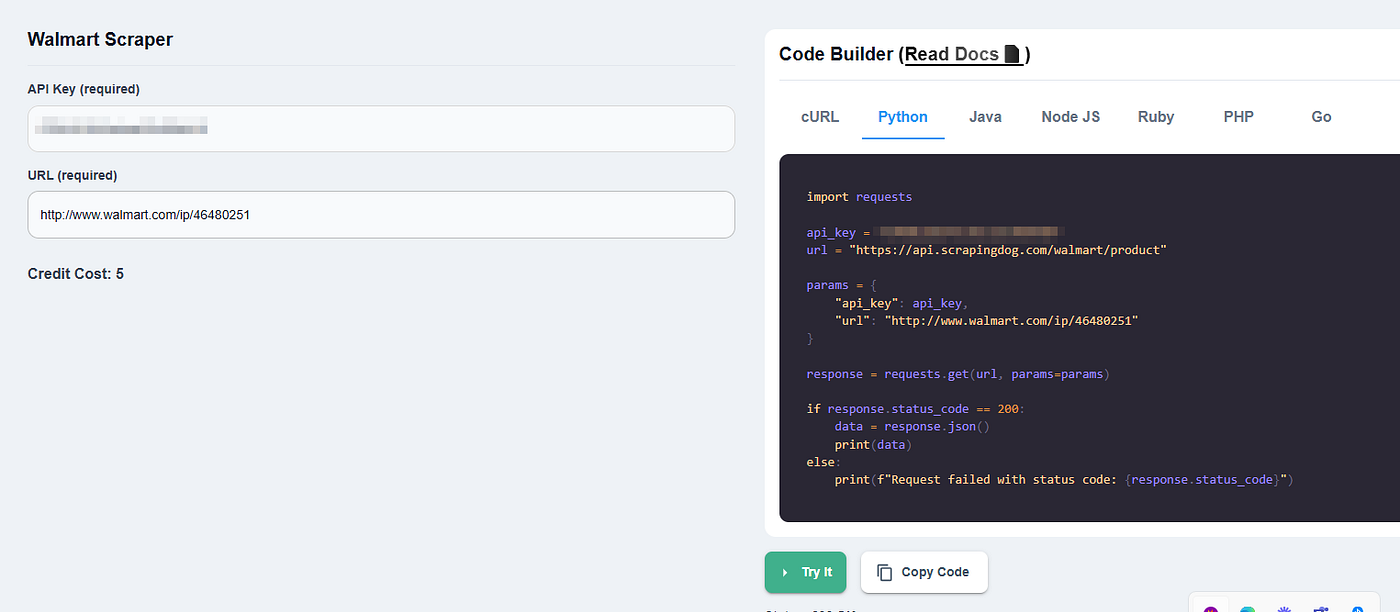

Scrapingdog’s Walmart Scraper API returns clean, structured JSON from any Walmart product page. No proxy management, no header tuning, no CAPTCHA handling on your end.

Once you sign up, you can test any Walmart URL directly from the dashboard before writing a single line of code.

The dashboard also generates ready-to-use Python code for any request, which you can copy directly into your project.

import requests

api_key = "Your-API-key"

url = "https://api.scrapingdog.com/walmart/product"

params = {

"api_key": api_key,

"url": "http://www.walmart.com/ip/46480251"

}

response = requests.get(url, params=params)

if response.status_code == 200:

data = response.json()

print(data)

else:

print(f"Request failed with status code: {response.status_code}")

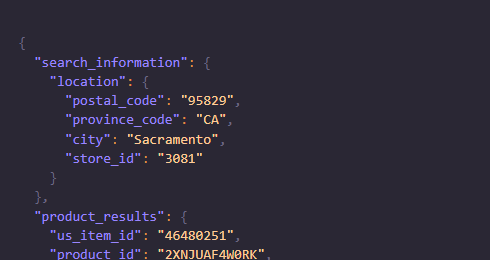

Remember to use your own API key in the above Python code. Once you run this code you will get beautiful parsed JSON data with all the valuable data from the Walmart product page.

I have also created a video tutorial on scraping Walmart at scale with Scrapingdog. Do check it out.

Here are Some Key Takeaways:

- Walmart product pages contain valuable data like price, ratings, availability, and reviews.

- Direct scraping often triggers anti-bot protections like CAPTCHA and IP blocks.

- Python (requests + BeautifulSoup) can extract basic product details.

- Walmart pages include dynamic and embedded JSON data that requires careful parsing.

- Using a scraper API simplifies scaling, proxy handling, and structured data extraction.

Conclusion

As we know Python is too great when it comes to web scraping. We just used two basic libraries to scrape Walmart product details and print results in JSON. But this process has certain limits and as we discussed above, Walmart will block you if you do not rotate proxies or change headers timely.

If you want to scrape thousands and millions of pages then using Scrapingdog will be the best approach.

I hope you like this little tutorial and if you do then please do not forget to share it with your friends and on social media.

Additional Resources

- Amazon Price Scraping using Python

- Building A Price Tracker for Amazon Products using Python

- Automating Amazon Price Tracking using Scrapingdog’s Amazon Scraper API & Make.com

- How to Scrape Flipkart Indian e-commerce Brand using Python

- How To Scrape Google Shopping using Python

- Web Scraping eBay Product Details using Python

- Web Scraping Myntra with Selenium & Python

- Web Scraping Yelp Reviews using Python

- Web Scraping Yellow Pages using Python

- What is User-Agent in Web Scraping & How To Use Them