TL;DR

- Two paths: Node.js + Cheerio for static pages; Puppeteer for JS-rendered sites.

- Demos: scrape Books to Scrape (product data) and a Facebook page (address, phone, email, rating).

- Covers the Node.js event loop / concurrency and why headless browsers help.

- At scale, use Scrapingdog for rotating proxies, headless rendering, retries; 1,000 free credits.

JavaScript isn’t just for building websites. With Node.js, it’s one of the most powerful languages for web scraping, especially for dynamic, JavaScript-rendered pages that Python’s requests library simply can’t handle without extra overhead.

In this guide, we’ll walk you through two practical approaches to web scraping with JavaScript:

- Using Axios + Cheerio for fast, lightweight scraping of static pages

- Using Puppeteer for dynamic pages that load content only after JavaScript execution

By the end, you’ll have working scrapers for both scenarios, plus a clear understanding of when to use each approach and how to scale without getting blocked.

Let’s get started.

What You’ll Learn in This Blog

- How Node.js handles scraping tasks asynchronously through its event loop

- How to scrape static pages using Axios + Cheerio

- How to scrape dynamic pages using Puppeteer

- How to reduce blocking with Scrapingdog

- Best practices for scraping at scale in Node.js

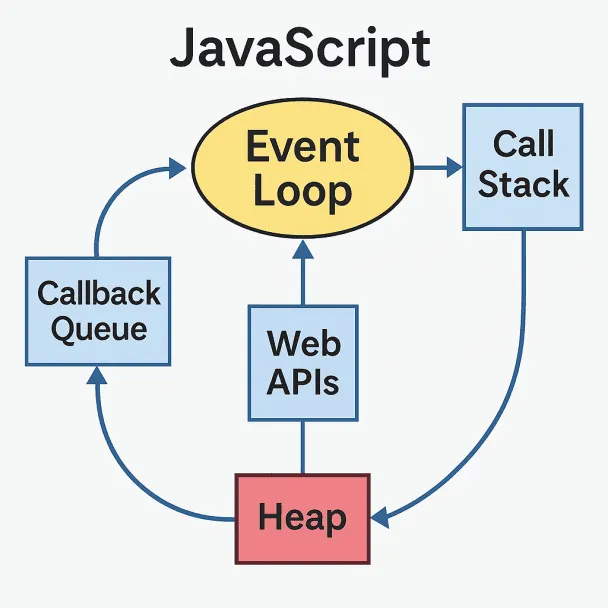

What is the Event Loop in JavaScript?

The event loop in JavaScript is a key component that allows Node.js to efficiently manage large-scale web scraping tasks.

Unlike languages like C or C++, which use multiple threads to perform tasks concurrently, JavaScript operates on a single-threaded model and handles tasks asynchronously through its event loop.

A key distinction in JavaScript is that it doesn’t run code in parallel but rather concurrently.

Confused?

Multiple tasks may start at the same time (like API calls or file reads), but JavaScript processes their results one at a time using the event loop.

It doesn’t execute all functions simultaneously, but it gives the illusion of multitasking by juggling asynchronous operations efficiently.

How does the Event Loop work?

Here’s the step-by-step procedure:

- The event loop continuously checks if the call stack is empty.

- If the call stack is empty, the event loop checks the event queue for pending events.

- If there are events in the queue, the event loop dequeues an event and executes its associated callback function.

- The callback function may perform asynchronous tasks, such as reading a file or making a network request.

- When the asynchronous task is completed, its callback function is placed in the event queue.

- The event loop continues to process events from the queue as long as events are waiting to be executed.
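The steps above can be observed with a small experiment. This is a minimal sketch rather than part of the tutorial's scraper, and the function name traceEventLoop is made up for illustration. It records the order in which synchronous code, a promise callback, and a timer callback run. One detail the list does not mention: promise callbacks are processed before timer callbacks once the call stack is empty.

```javascript
// Hypothetical helper (not from the tutorial): records the order in which
// synchronous code, a promise callback, and a timer callback actually run.
function traceEventLoop() {
  const order = [];
  return new Promise((resolve) => {
    order.push('sync: start');

    // Timer callback: queued, runs only after the call stack is empty.
    setTimeout(() => {
      order.push('timer callback');
      resolve(order);
    }, 0);

    // Promise callback: also deferred, but handled before timer callbacks.
    Promise.resolve().then(() => order.push('promise callback'));

    order.push('sync: end');
  });
}

traceEventLoop().then((order) => console.log(order));
// → [ 'sync: start', 'sync: end', 'promise callback', 'timer callback' ]
```

Both synchronous entries come first even though the timer delay is 0, because queued callbacks only run once the current code has finished.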

Prerequisites

Before we begin, make sure you have Node.js installed on your machine.

We’ll be using three libraries throughout this tutorial:

- Axios — A popular HTTP client for making requests to the target website.

- Cheerio — A fast, jQuery-like library for parsing and extracting data from HTML.

- Puppeteer — A Node.js library that controls a headless Chrome browser, essential for scraping pages that require JavaScript execution.

Before we install these libraries, let’s create a folder to keep our JavaScript files.

mkdir nodejs_tutorial

cd nodejs_tutorial

npm init

npm init initializes a new Node.js project and creates a package.json file. Now, let’s install all of these libraries.

npm i axios cheerio puppeteer

This step will install all the libraries in your project. Create a .js file inside this folder by any name you like. I am using first_section.js.

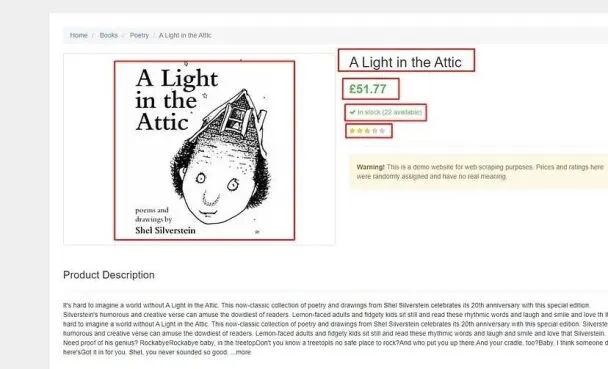

Now, we are all set to create a web scraper. So, for the first section, we are going to scrape this page from books.toscrape.com.

Part 1: Scraping Static Pages with Node.js and Cheerio

Our first job is to make a GET request to our host website using Axios. Let’s test our setup first by making a GET request. If we get a response code of 200, then we can proceed with parsing the data.

//first_section.js

const axios = require('axios');

const cheerio = require('cheerio');

async function scraper() {

let target_url = 'https://books.toscrape.com/catalogue/a-light-in-the-attic_1000/index.html';

let response = await axios.get(target_url);

return { status: response.status };

}

scraper().then((data) => {

console.log(data);

}).catch((err) => {

console.log(err);

});

The code is pretty simple, but let me explain it step by step.

- The axios module is imported to make HTTP requests and retrieve the HTML content of the target page.

- The cheerio module is imported to parse and manipulate the HTML using jQuery-like syntax.

- The scraper function is async, allowing it to use await to pause execution until promises resolve.

- Inside the function, the target URL is assigned to target_url.

- axios.get() makes an HTTP GET request to that URL and returns a response object.

- The HTML content of the page is available at response.data.

- The function returns an object containing the HTTP status code via response.status.

Invoking the scraper function:

- scraper() is called and .then() handles the resolved response, logging the result to the console.

- Any errors thrown during execution are caught by .catch() and logged to the console.

I hope you have got an idea of how this code is actually working. Now, let’s run this and see what status we get. You can run the code using the command below.

node first_section.js

When I run, I get a 200 status code.

{ status: 200 }

It means my code is ready, and I can proceed with the parsing process.

What are we going to scrape?

Before writing any parsing logic, it’s always a good idea to decide exactly what data you want to extract from the target page.

We’ll be extracting five data elements from this page:

- Product Title

- Product Price

- Stock Availability & Quantity

- Product Rating

- Product Image

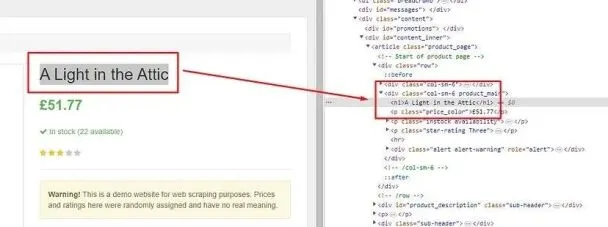

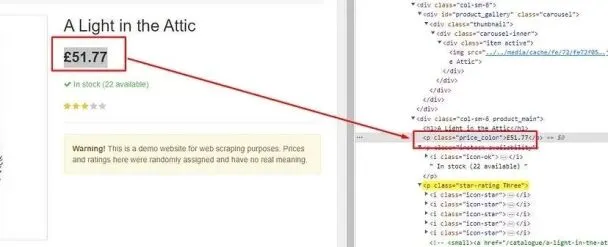

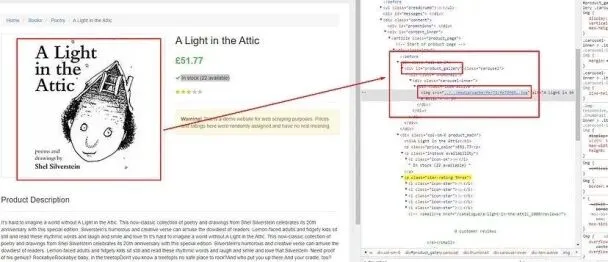

Before making any requests, it’s worth inspecting the page structure to locate each element in the DOM. Simply right-click on any element and select Inspect to open Chrome DevTools. This tells you exactly what selectors to target with Cheerio.

Once the page HTML is downloaded via axios.get(), we’ll use Cheerio’s .find() method to locate each element and extract its value.

Identifying the location of each element

Let’s start by searching for the product title.

The title is stored inside h1 tag. So, this can be easily extracted using Cheerio.

const $ = cheerio.load(response.data);

obj["title"] = $('h1').text();

- The cheerio.load() function is called, passing in response.data (the HTML returned by Axios) as the content to be loaded.

- This creates a Cheerio instance, conventionally assigned to the variable $, which represents the loaded HTML and allows us to query and manipulate it using familiar jQuery-like syntax.

- $('h1') selects all <h1> elements in the loaded HTML. In our case, there is only one.

- .text() is then called on the selection to extract its text content.

- The extracted title is assigned to the obj["title"] property.

Find the price tag of the product.

This price data can be found inside the p tag with class name price_color.

obj["price_of_product"] = $('p.price_color').text().trim();

Finding the stock data tag

Stock data can be found inside p tag with the class name instock.

obj["stock_data"] = $('p.instock').text().trim();
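Both values come back as display strings. If you want numbers instead, a small post-processing step helps. The sketch below is an illustration only: parsePrice and parseStock are hypothetical helpers, and the input formats ("£51.77" and "In stock (22 available)") are assumptions about how this page currently renders its text.

```javascript
// Hypothetical helpers for illustration; not part of the original scraper.

// "£51.77" -> 51.77 (returns null when the text contains no number)
function parsePrice(text) {
  const match = text.match(/\d+(?:\.\d+)?/);
  return match ? Number(match[0]) : null;
}

// "In stock (22 available)" -> { inStock: true, quantity: 22 }
function parseStock(text) {
  const match = text.match(/\((\d+)\s+available\)/i);
  return {
    inStock: /in stock/i.test(text),
    quantity: match ? Number(match[1]) : null,
  };
}

console.log(parsePrice('£51.77'));
console.log(parseStock('In stock (22 available)'));
```

Returning null for missing values keeps a layout change on the site from silently turning into a 0 or NaN in your data.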

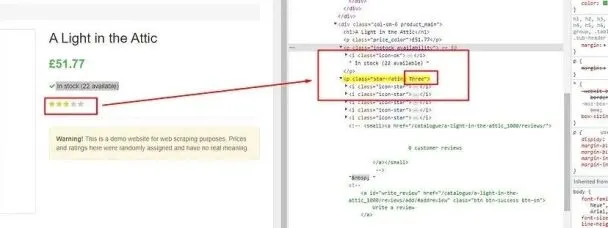

Finding the star rating tag

Here, the rating is encoded in the class name itself (for example, star-rating Three). So, we first select the element by its star-rating class, then read the value of its class attribute using the .attr() function provided by Cheerio and take the second word.

obj["rating"] = $('p.star-rating').attr('class').split(" ")[1];
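Taking index 1 of the split class string works as long as the rating word is always the second class. A slightly more defensive variant is sketched below, assuming the rating words are One through Five; parseStarRating is a hypothetical helper name, not something Cheerio provides.

```javascript
// Hypothetical helper: converts a class attribute such as "star-rating Three"
// into the number 3, regardless of the order of the class names.
const RATING_WORDS = new Map([
  ['One', 1],
  ['Two', 2],
  ['Three', 3],
  ['Four', 4],
  ['Five', 5],
]);

function parseStarRating(classAttr) {
  const tokens = (classAttr || '').split(/\s+/);
  const word = tokens.find((token) => RATING_WORDS.has(token));
  return word ? RATING_WORDS.get(word) : null; // null when no rating word is present
}

console.log(parseStarRating('star-rating Three')); // → 3
```

You would pass it the same value the tutorial reads with .attr('class').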

Finding the image tag

The image is stored inside an img tag, which is located inside the div tag with id product_gallery.

obj["image"] = "https://books.toscrape.com" + $('div#product_gallery').find('img').attr('src').replace("../..", "");

By adding “https://books.toscrape.com” as a prefix, we complete the URL.
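String replacement works for this page, but it depends on the src always starting with "../..". As an alternative sketch, Node's built-in URL class can resolve a relative path against the page URL. The helper name toAbsoluteUrl and the example image path are placeholders for illustration.

```javascript
// Hypothetical helper: resolves a relative src against the URL of the page
// it was found on, using the built-in WHATWG URL class.
function toAbsoluteUrl(src, pageUrl) {
  return new URL(src, pageUrl).href;
}

const pageUrl = 'https://books.toscrape.com/catalogue/a-light-in-the-attic_1000/index.html';

// The image path below is a placeholder, not the real file name.
console.log(toAbsoluteUrl('../../media/cache/example.jpg', pageUrl));
// → https://books.toscrape.com/media/cache/example.jpg
```

The same call also handles src values that are already absolute or that start with a single slash.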

Everything is ready; now, let’s run the code and see what data we get.

As you can see, we were able to extract all the data we were looking for from the target website using Axios and Cheerio.

Complete Code

You can extract many other things from the page, but my main goal was to show you how the combination of any HTTP client (Unirest, Axios, etc.) and Cheerio can make web scraping super simple.

The code will look like this.

const axios = require('axios');

const cheerio = require('cheerio');

async function scraper() {

let obj = {};

let arr = [];

let target_url = 'https://books.toscrape.com/catalogue/a-light-in-the-attic_1000/index.html';

let response = await axios.get(target_url);

const $ = cheerio.load(response.data);

obj["title"] = $('h1').text();

obj["price_of_product"] = $('p.price_color').text().trim();

obj["stock_data"] = $('p.instock').text().trim();

obj["rating"] = $('p.star-rating').attr('class').split(" ")[1];

obj["image"] = "https://books.toscrape.com" + $('div#product_gallery').find('img').attr('src').replace("../..", "");

arr.push(obj);

return { status: response.status, data: arr };

}

scraper().then((data) => {

console.log(data.data);

}).catch((err) => {

console.log(err);

});

Part 2: Scraping Dynamic Pages with Node.js and Puppeteer

What is Puppeteer?

Puppeteer is a Node.js library developed by Google that provides a high-level API to control headless versions of the Chrome or Chromium web browsers.

It is widely used for automating and interacting with web pages, making it a popular choice for web scraping, automated testing, browser automation, and other web-related tasks.

Why do we need a headless browser to scrape a website?

- Rendering JavaScript– Many modern websites rely heavily on JavaScript to load and display content dynamically. Traditional web scrapers may not execute JavaScript, resulting in incomplete or inaccurate data extraction. Headless browsers can fully render and execute JavaScript, ensuring that the scraped data reflects what a human user would see when visiting the site.

- Handling User Interactions– Some websites require user interactions, such as clicking buttons, filling out forms, or scrolling, to access the data of interest. Headless browsers can automate these interactions, enabling you to programmatically navigate and interact with web pages as needed.

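- CAPTCHAs and Bot Detection– Many websites employ CAPTCHAs and anti-bot mechanisms to prevent automated scraping. A headless browser runs a real browser engine, so it can mimic human-like behavior such as scrolling and realistic timing, and it is less likely to trip basic bot detection. It does not solve CAPTCHAs by itself, though; that usually takes an additional service.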

- Screenshots and PDF Generation– Headless browsers can capture screenshots or generate PDFs of web pages, which can be valuable for archiving or documenting web content. You can read more about generating PDFs with a headless chrome browser here.

How does it work?

1. Installation:

- Start by installing Puppeteer in your Node.js project using npm or yarn.

- You can do this with the following command:

npm install puppeteer

2. Import Puppeteer:

- In your Node.js script, import the Puppeteer library by requiring it at the beginning of your script.

const puppeteer = require('puppeteer');

3. Launching a Headless Browser:

- Use Puppeteer’s puppeteer.launch() method to start a headless Chrome or Chromium browser instance.

- Headless means that the browser runs without a graphical user interface (GUI), making it more suitable for automated tasks.

- You can customize browser options during the launch, such as specifying the executable path or starting with a clean user profile.

4. Creating a New Page:

- After launching the browser, you can create a new page using the browser.newPage() method.

- This page object represents the tab or window in the browser where actions will be performed.

5. Navigating to a Web Page:

- Use the page.goto() method to navigate to a specific URL.

- Puppeteer will load the web page and wait for it to be fully loaded before proceeding.

6. Interacting with the Page:

- Puppeteer allows you to interact with the loaded web page, including:

- Clicking on elements.

- Typing text into input fields.

- Extracting data from the page’s DOM (Document Object Model).

- Taking screenshots.

- Generating PDFs.

- Evaluating JavaScript code on the page.

- These interactions can be scripted to perform a wide range of actions.

7. Handling Events:

- You can listen for various events on the page, such as network requests, responses, console messages, and more.

- This allows you to capture and handle events as needed during your automation.

8. Closing the Browser:

- When your tasks are complete, you should close the browser using browser.close() to free up system resources.

- Alternatively, you can keep the browser open for multiple operations if needed.

9. Error Handling:

- It’s important to implement error handling in your Puppeteer scripts to gracefully handle any unexpected issues.

- This includes handling exceptions, network errors, and timeouts.
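Steps 3 to 5, 8, and 9 fit together into one small pattern: launch, open a page, navigate, do the work, and always close the browser. The sketch below is one hedged way to write it. withPage is a hypothetical helper, and the launcher parameter stands for any object with a Puppeteer-style launch() method (normally the puppeteer module itself), so the helper has no hard dependency of its own.

```javascript
// Sketch only: `launcher` is any object with a Puppeteer-style launch() method,
// for example the module returned by require('puppeteer').
async function withPage(launcher, url, task) {
  const browser = await launcher.launch({ headless: true });
  try {
    const page = await browser.newPage();
    await page.goto(url, { waitUntil: 'domcontentloaded' });
    // `task` receives the loaded page and returns whatever it scrapes.
    return await task(page);
  } finally {
    // Runs on success and on failure, so the browser process is never left behind.
    await browser.close();
  }
}

// Usage (assumes Puppeteer is installed):
// const puppeteer = require('puppeteer');
// withPage(puppeteer, 'https://books.toscrape.com/', (page) => page.title())
//   .then(console.log)
//   .catch(console.error);
```

Putting browser.close() in finally matters most for long-running scrapers, where a single unhandled error would otherwise leave a Chrome process consuming memory.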

I think this much information is enough for now on Puppeteer and I know you are eager to build a web scraper using Puppeteer. Let’s build a scraper.

Collect dynamic data with Puppeteer

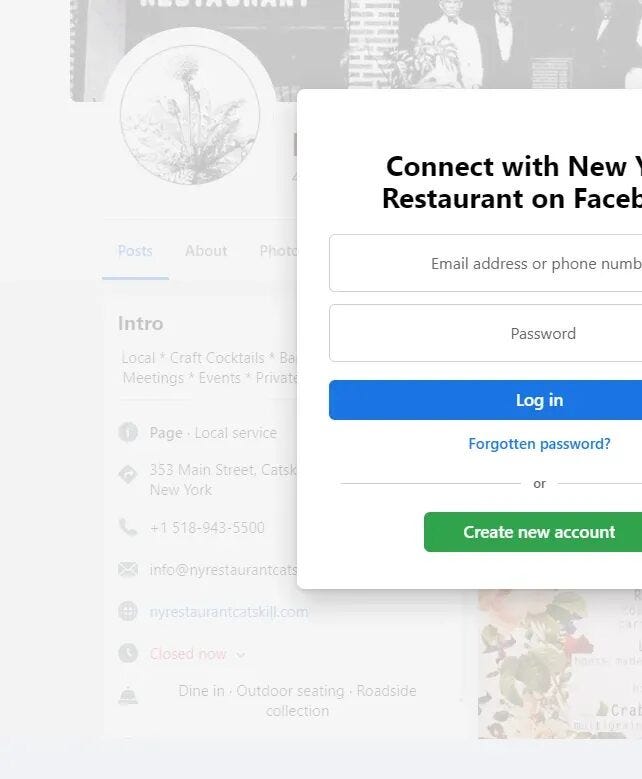

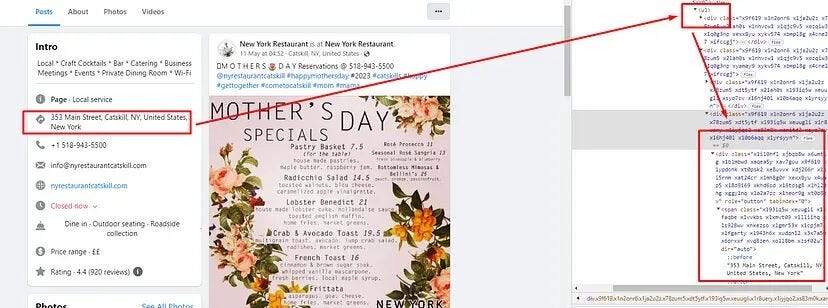

We have selected Facebook because it loads its data through JavaScript execution. We are going to scrape this page using Puppeteer. The page looks like this.

As usual, we should test our setup before starting with the scraping and parsing process.

Downloading the raw data

We will write code that opens the browser and then opens the Facebook page that we want to scrape. Then, it will close the browser once the page is loaded completely.

const puppeteer = require('puppeteer');

const cheerio = require('cheerio');

async function scraper(){

let obj={}

let arr=[]

let target_url = 'https://www.facebook.com/nyrestaurantcatskill/'

const browser = await puppeteer.launch({headless:false});

const page = await browser.newPage();

await page.setViewport({ width: 1280, height: 800 });

let crop = await page.goto(target_url, {waitUntil: 'domcontentloaded'});

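// Give dynamic content 2 seconds to render before reading the HTML.

// A plain timer is used because page.waitFor() is no longer available in current Puppeteer releases.

await new Promise((resolve) => setTimeout(resolve, 2000));

let data = await page.content();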

await browser.close();

return {status:crop.status(),data:data}

}

scraper().then((data) => {

console.log(data.data)

}).catch((err) => {

console.log(err)

})

Let’s break down the code step by step:

- scraper is declared as an async function, allowing it to use await to pause execution until each asynchronous operation completes.

- puppeteer.launch({ headless: false }) launches a Chrome browser instance. Setting headless: false opens a visible browser window, which is useful for Puppeteer debugging. Switch it to true for production to run silently in the background.

- browser.newPage() opens a new browser tab and assigns it to the page variable.

- page.setViewport({ width: 1280, height: 800 }) sets the page viewport size, simulating a standard desktop screen.

- page.goto(target_url, { waitUntil: 'domcontentloaded' }) navigates to the target URL and waits until the DOM has loaded before proceeding.

- A 2-second pause then gives any additional dynamic content time to finish rendering.

- page.content() retrieves the fully rendered HTML of the page as a string.

- browser.close() shuts down the browser instance.

- The function returns an object containing the HTTP status code and the scraped data.
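A fixed pause is simple, but it either wastes time or is too short on a slow connection. As a hedged alternative, you can wait for a specific element with Puppeteer's page.waitForSelector(). In the sketch below, waitForContent is a hypothetical helper, and the default selector is the container class used later in this tutorial; Facebook's class names change often, so treat it as a placeholder.

```javascript
// Sketch: wait for a specific element instead of sleeping for a fixed time.
// `page` is a Puppeteer page; the selector and timeout are illustrative defaults.
async function waitForContent(page, selector = 'div.xieb3on', timeoutMs = 10000) {
  try {
    await page.waitForSelector(selector, { timeout: timeoutMs });
    return true;
  } catch (err) {
    // waitForSelector rejects when the element does not show up in time;
    // report it and let the caller decide what to do next.
    console.warn(`"${selector}" was not found within ${timeoutMs} ms`);
    return false;
  }
}

// Usage inside scraper(), after page.goto():
// const ready = await waitForContent(page);
// if (ready) { data = await page.content(); }
```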

Once you run this code you should see this.

If you see this, then your setup is ready and we can proceed with data parsing using Cheerio.

What are we going to scrape?

We are going to scrape these five data elements from the page.

- Address

- Phone number

- Email address

- Website

- Rating

Now, as usual, we are going to first analyze their location inside the DOM with the help of Chrome DevTools. Then, using Cheerio, we are going to parse each of them.

Identifying the location of each element

Let’s start with the address first and find out its location.

Once you inspect it, you will find that all the information we want to scrape is stored inside this div tag with the class name xieb3on. And then, inside this div tag, we have two more div tags out of which we are interested in the second one because the information is inside that.

Let’s find this out first.

$('div.xieb3on').first().find('div.x1swvt13').each((i,el) => {

if(i===1){

}

})

The i === 1 check means the body runs only for the second matching div (indexes start at 0), which is the block we are interested in. Now, the question is how to extract the address from it. Well, it has become very easy now.

The address can be found inside the first div tag with the class x1heor9g. This div tag is inside the ul tag.

$('div.xieb3on').first().find('div.x1swvt13').each((i,el) => {

if(i===1){

obj["address"] = $(el).find('ul').find('div.x1heor9g').first().text().trim()

}

})

Let’s find the email, website, phone number, and the rating

All four elements, phone number, rating, email, and website, are stored inside div tags with the class xu06os2, all nested within the same ul tag as the address. Since they share the same class, we can’t target them individually by selector. Instead, we iterate over each div.xu06os2 and use the value’s content to determine what type of data it is: a + prefix for phone numbers, @ for emails, Rating for ratings, and .com for websites.

$(el).find('ul').find('div.xu06os2').each((o, p) => {

const value = $(p).text().trim();

if (value.includes("+")) {

obj["phone"] = value;

} else if (value.includes("Rating")) {

obj["rating"] = value;

} else if (value.includes("@")) {

obj["email"] = value;

} else if (value.includes(".com")) {

obj["website"] = value;

}

});

arr.push(obj);

obj = {};
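The substring checks above are intentionally simple, which also makes them easy to fool: a business name containing "+" would be stored as a phone number, and a website ending in .org would be skipped. As a hedged sketch of a stricter variant, the hypothetical classifyField helper below uses regular expressions. The patterns and the sample values are illustrative assumptions, not a complete validator.

```javascript
// Hypothetical helper: guesses the type of a text value scraped from the page.
// Returns 'phone', 'rating', 'email', 'website', or null when nothing matches.
function classifyField(value) {
  const text = value.trim();

  // Optional leading "+", then at least 7 digits, spaces, or phone punctuation.
  if (/^\+?[\d\s().-]{7,}$/.test(text)) return 'phone';

  if (/rating/i.test(text)) return 'rating';

  // something@something.tld with no spaces
  if (/^[^\s@]+@[^\s@]+\.[^\s@]+$/.test(text)) return 'email';

  // Optional scheme, a host name ending in a letters-only TLD, optional path.
  if (/^(https?:\/\/)?[\w-]+(\.[\w-]+)*\.[a-z]{2,}(\/\S*)?$/i.test(text)) return 'website';

  return null;
}

// Sample values are made up for illustration.
console.log(classifyField('+1 518-555-0100')); // → phone
console.log(classifyField('info@example.com')); // → email
console.log(classifyField('example.org/menu')); // → website
```

The tutorial's own loop keeps the simpler .includes() checks, which are explained next.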

1. .find('div.xu06os2') selects all <div> elements with the class xu06os2 nested inside the previously selected <ul>.

2. .each((o, p) => { ... }) iterates over each matched <div>, executing the callback for every element.

3. $(p).text().trim() extracts and cleans the text content of the current <div>, storing it in value.

4. Since all four elements share the same class, we use .includes() to identify each one by its content:

- "+" indicates a phone number → assigned to obj["phone"]

- "Rating" indicates a rating → assigned to obj["rating"]

- "@" indicates an email address → assigned to obj["email"]

- ".com" indicates a website URL → assigned to obj["website"]

5. arr.push(obj) appends the populated object to the arr array.

6. obj = {} resets the object for the next iteration.

Complete Code

Let’s take a look at the complete code along with the response it returns after execution.

const puppeteer = require('puppeteer');

const cheerio = require('cheerio');

async function scraper() {

let obj = {};

let arr = [];

let target_url = 'https://www.facebook.com/nyrestaurantcatskill/';

const browser = await puppeteer.launch({ headless: false });

const page = await browser.newPage();

await page.setViewport({ width: 1280, height: 800 });

const response = await page.goto(target_url, { waitUntil: 'domcontentloaded' });

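// A plain timer replaces page.waitForTimeout(), which newer Puppeteer releases no longer provide.

await new Promise((resolve) => setTimeout(resolve, 2000));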

let data = await page.content();

await browser.close();

const $ = cheerio.load(data);

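// All the details live in the second div.x1swvt13 block of the first div.xieb3on container

$('div.xieb3on').first().find('div.x1swvt13').each((i, el) => {

if (i === 1) {

obj["address"] = $(el).find('ul').find('div.x1heor9g').first().text().trim();

$(el).find('ul').find('div.xu06os2').each((o, p) => {

const value = $(p).text().trim();

if (value.includes("+")) {

obj["phone"] = value;

} else if (value.includes("Rating")) {

obj["rating"] = value;

} else if (value.includes("@")) {

obj["email"] = value;

} else if (value.includes(".com")) {

obj["website"] = value;

}

});

arr.push(obj);

obj = {};

}

});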

return { status: response.status(), data: arr };

}

scraper().then((data) => {

console.log(data.data);

}).catch((err) => {

console.log(err);

});

Let’s run it and see the response.

We successfully scraped a dynamic website using JavaScript rendering through Puppeteer. If you’re looking to deepen your understanding of Puppeteer, don’t miss our detailed guide on web scraping with Puppeteer, a powerful tool for handling JavaScript-heavy sites.

Scraping without getting blocked with Scrapingdog

Scrapingdog is a web scraping API that uses a new proxy on every new request. Once you start scraping any website at scale, you will face two challenges.

- Puppeteer will consume too much CPU. Your machine will get super slow.

- Your IP will be banned in no time.

With Scrapingdog, you can resolve both issues very easily. It uses headless Chrome browsers to render websites, and every request goes through a new IP.

const cheerio = require('cheerio');

const axios = require('axios');

async function scraper(){

let obj={}

let arr=[]

let api_key='your-api-key'

let target_url = 'https://www.facebook.com/nyrestaurantcatskill/'

// Passing the values through params lets Axios URL-encode the target URL

let response = await axios.get('https://api.scrapingdog.com/scrape', { params: { api_key: api_key, url: target_url } })

const $ = cheerio.load(response.data)

$('div.xieb3on').first().find('div.x1swvt13').each((i,el) => {

if(i===1){

obj["address"] = $(el).find('ul').find('div.x1heor9g').first().text().trim()

$(el).find('ul').find('div.xu06os2').each((o,p) => {

let value = $(p).text().trim()

if(value.includes("+")){

obj["phone"]=value

}else if(value.includes("Rating")){

obj["rating"]=value

}else if(value.includes("@")){

obj["email"]=value

}else if(value.includes(".com")){

obj["website"]=value

}

})

arr.push(obj)

obj={}

}

})

return {data:arr}

}

scraper().then((data) => {

console.log(data.data)

}).catch((err) => {

console.log(err)

})

As you can see, you just have to make a simple GET request, and your job is done. Scrapingdog will handle everything from headless chrome to retries for you.
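One detail to watch when a target URL has its own query string: it must be percent-encoded before it goes into the url parameter, or everything after the first & will be read as a separate API parameter. If you build the request URL by hand, the sketch below shows one way to do it with the built-in URLSearchParams class. buildScrapingdogUrl is a hypothetical helper; the endpoint and the api_key and url parameter names are the ones used in the code above, and the example target URL is a placeholder.

```javascript
// Hypothetical helper: builds the API request URL with both values
// percent-encoded by the built-in URLSearchParams class.
function buildScrapingdogUrl(apiKey, targetUrl) {
  const params = new URLSearchParams({ api_key: apiKey, url: targetUrl });
  return `https://api.scrapingdog.com/scrape?${params.toString()}`;
}

// The target below is a placeholder with its own query string.
const requestUrl = buildScrapingdogUrl(
  'your-api-key',
  'https://example.com/search?q=books&page=2'
);

console.log(requestUrl);

// Decoding shows that "&page=2" stayed inside the url parameter.
console.log(new URL(requestUrl).searchParams.get('url'));
// → https://example.com/search?q=books&page=2
```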

You can start with 1,000 free credits to see how Scrapingdog works for your use case.

Why Should You Prefer JavaScript for Web Scraping?

JavaScript, and consequently Node.js, is designed to be non-blocking and asynchronous.

In the web scraping context, this means that Node.js can initiate tasks (such as making HTTP requests) and continue executing other code without waiting for those tasks to complete.

This non-blocking nature allows Node.js to efficiently manage multiple operations concurrently.

Node.js uses an event-driven architecture. When an asynchronous operation, such as a network request or file read, is completed, it triggers an event.

The event loop (discussed earlier in this article) listens for these events and dispatches callback functions to handle them. This event-driven model ensures that Node.js can manage multiple tasks concurrently without getting blocked.

With its event-driven and non-blocking architecture, Node.js can easily handle concurrency in web scraping.

It can initiate multiple HTTP requests to different websites concurrently, manage the responses as they arrive, and process them as needed.

This concurrency is essential for scraping large volumes of data efficiently.
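In practice, you rarely want to fire every request at once, because that is a quick way to get blocked or overload the target site. A common pattern is to cap the number of requests in flight. The sketch below is one hedged way to do that with plain promises; mapWithLimit is a hypothetical helper, and the worker can be any async function, such as a call to axios.get().

```javascript
// Hypothetical helper: runs `worker` over `items` with at most `limit`
// tasks in flight at any time, and returns the results in input order.
async function mapWithLimit(items, limit, worker) {
  const results = new Array(items.length);
  let next = 0;

  // Each runner keeps pulling the next unprocessed index until none are left.
  async function runner() {
    while (next < items.length) {
      const index = next++; // no race: this line runs without interruption
      results[index] = await worker(items[index], index);
    }
  }

  const runnerCount = Math.max(1, Math.min(limit, items.length));
  await Promise.all(Array.from({ length: runnerCount }, () => runner()));
  return results;
}

// Usage (assumes Axios is installed; the URL list is up to you):
// const axios = require('axios');
// mapWithLimit(urls, 3, (url) => axios.get(url).then((res) => res.status))
//   .then(console.log)
//   .catch(console.error);
```

Because the event loop handles one callback at a time, the shared next counter needs no locking, which is the single-threaded model described earlier working in your favor.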

5 Key Takeaways from JavaScript Web Scraping

- The article shows two main ways to scrape with JavaScript: simple HTTP requests + Cheerio for static pages, and Puppeteer for sites that need JavaScript rendering.

- It walks through a step-by-step example of extracting product data (like title, price, stock) from a sample site using Node.js code.

- Puppeteer is explained as a headless browser tool that runs JavaScript, interacts with pages, and helps when normal requests don’t show dynamic content.

- There are clear sections on project setup, required libraries (Axios, Cheerio, Puppeteer), and parsing HTML with Cheerio.

- For larger-scale scraping, the blog highlights why APIs are useful to handle proxies, rendering, and blocking without extra infrastructure.

Conclusion

JavaScript is a powerful language for web scraping, and this tutorial proves it. While you might need to dig into some basics to get started, it offers speed and reliability once you do.

In this tutorial, we explored how JavaScript can be used for scraping both static and dynamic websites. When dealing with dynamic content, web scraping with Playwright is a powerful alternative to Puppeteer, offering great flexibility and reliability.

I just wanted to give you an idea of how JavaScript can be used for web scraping, and I hope this tutorial has made things a little clearer.